A 107-Page Review of RAG and Agent & LLM Memory

Today, I'm sharing a 107-page technical review from Renmin University, Fudan University, Peking University, and others titled "Memory in the Age of AI Agents: A Survey Forms, Functions and Dynamics."

Project Address: https://github.com/Shichun-Liu/Agent-Memory-Paper-List

Paper Address: https://arxiv.org/pdf/2512.13564

Over the past two years, we have watched Large Language Models (LLMs) evolve rapidly into AI Agents. From Deep Research to software engineering, and from scientific discovery to multi-agent collaboration, these foundation-model-based agents are pushing the boundaries of Artificial General Intelligence (AGI).

But a core question emerges: how can agents keep learning and adapting when the LLM's parameters are static and cannot be updated quickly?

The answer is memory.

"Memory is the key capability to transform static LLMs into agents capable of continuous adaptation through environmental interaction."

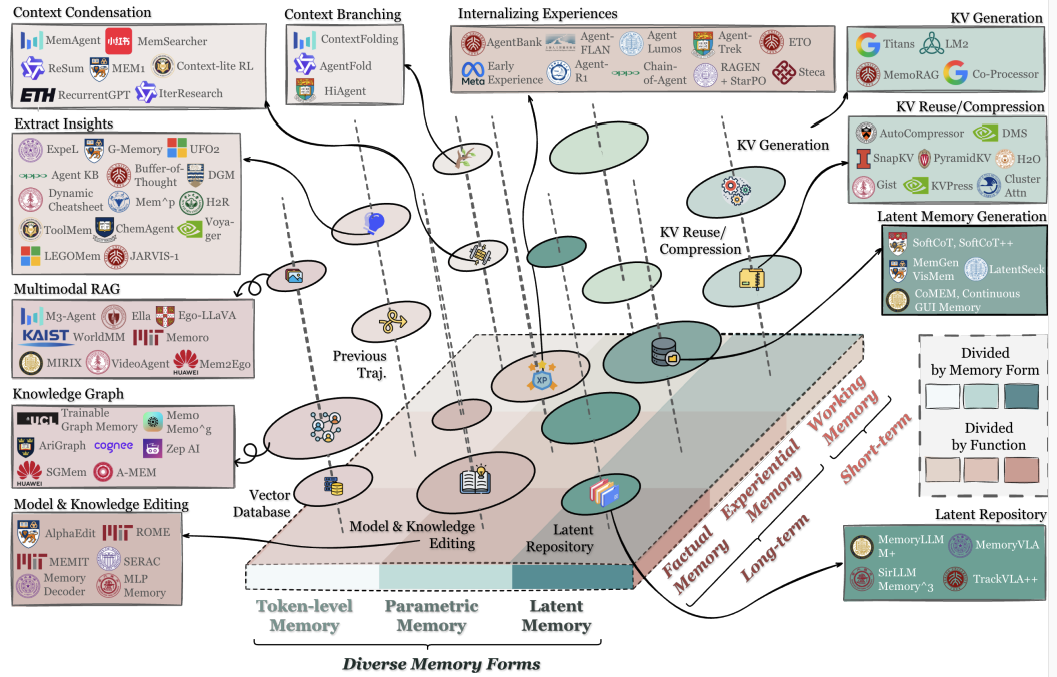

Figure 1 shows the unified taxonomy proposed in the paper. It organizes agent memory along three dimensions (Forms, Functions, and Dynamics) and maps representative systems onto this taxonomy.

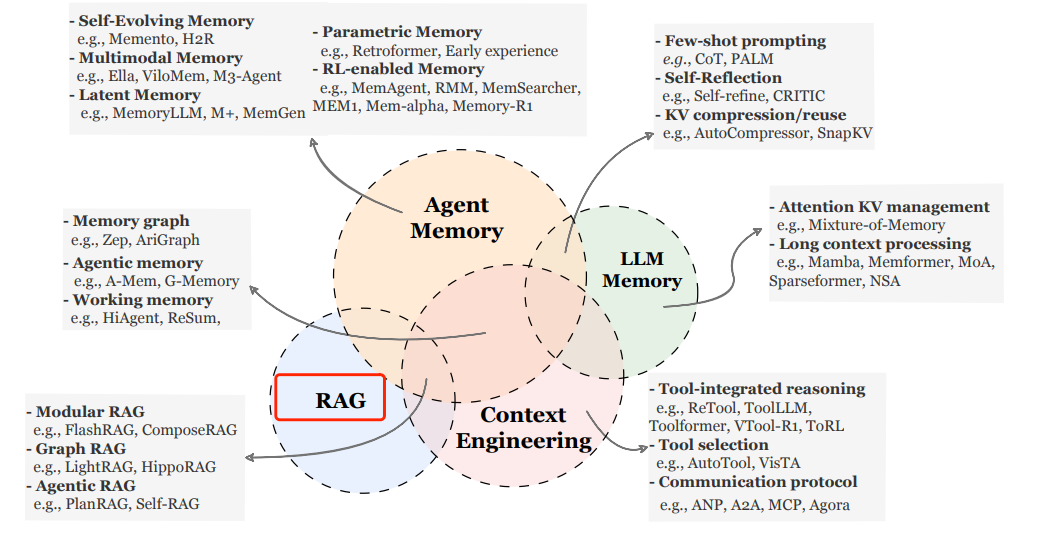

The paper also clearly distinguishes Agent Memory from several closely related but fundamentally different concepts: LLM Memory, Retrieval-Augmented Generation (RAG), and Context Engineering. Although they are all related to the storage and utilization of information, there are key differences in goals, mechanisms, and application scenarios.

Agent Memory Technology

- Self-Evolving Memory: Memento, H2R
- Multimodal Memory: Ella, ViloMem, M3-Agent
- Latent Memory: MemoryLLM, M+, MemGen
- Parametric Memory: Retroformer, Early experience
- RL-enabled Memory: MemAgent, RMM, MemSearcher, MEM1, Mem-alpha, Memory-R1

Agent Memory vs. RAG

RAG-related techniques:

- Modular RAG: FlashRAG, ComposeRAG
- Graph RAG: LightRAG, HippoRAG
- Agentic RAG: PlanRAG, Self-RAG

Both RAG and agent memory involve retrieving information from external storage to enhance model capabilities, but there is a fundamental difference in their design philosophy:

| Feature | RAG | Agent Memory |
|---|---|---|
| Core Objective | Provide relevant background knowledge for the current query | Support continuous learning and adaptive behavior over time |
| Information Source | Usually a static, pre-built knowledge base | Dynamically generated, personalized information from the agent's own interaction experience |
| Retrieval Trigger | Passively triggered by user queries | The agent actively decides when and what to retrieve |
| Information Update | Knowledge base is usually updated offline | Updated online, continuously, and selectively |
| Feedback Loop | No direct feedback mechanism | Forms a closed loop with environmental interaction |

Key Difference: RAG is a knowledge expansion tool, while agent memory is a learning mechanism. RAG answers "What do I know?", while agent memory answers "What have I learned?".
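
To make the contrast concrete, here is a toy sketch, not taken from the paper. It assumes word-overlap scoring in place of real embeddings, and every name in it (`StaticRAG`, `AgentMemory`, `run_episode`, `toy_act`) is hypothetical. The RAG retriever reads from a fixed corpus and never writes back; the agent memory is filled from the agent's own episodes, so its behavior changes over time.

```python
from dataclasses import dataclass, field
from typing import Callable


def words(text: str) -> set[str]:
    """Lowercased bag of words; a toy stand-in for an embedding model."""
    return set(text.lower().split())


def score(query: str, doc: str) -> int:
    """Relevance = number of shared words (illustrative heuristic only)."""
    return len(words(query) & words(doc))


def top_k(query: str, pool: list[str], k: int) -> list[str]:
    """Return up to k items from pool with non-zero overlap, best first."""
    ranked = sorted(pool, key=lambda d: score(query, d), reverse=True)
    return [d for d in ranked[:k] if score(query, d) > 0]


class StaticRAG:
    """RAG-style retrieval: the knowledge base is built offline and never changes."""

    def __init__(self, documents: list[str]) -> None:
        self.documents = tuple(documents)  # read-only at query time

    def retrieve(self, query: str, k: int = 1) -> list[str]:
        # Passively triggered by a user query; nothing is written back.
        return top_k(query, list(self.documents), k)


@dataclass
class AgentMemory:
    """Agent memory: entries come from the agent's own interaction experience."""

    entries: list[str] = field(default_factory=list)

    def retrieve(self, task: str, k: int = 1) -> list[str]:
        return top_k(task, self.entries, k)

    def write(self, lesson: str) -> bool:
        # Online, selective update: duplicate lessons are not stored twice.
        if lesson in self.entries:
            return False
        self.entries.append(lesson)
        return True


# A hypothetical environment step: (task, recalled hints) -> (outcome, success).
Act = Callable[[str, list[str]], tuple[str, bool]]


def run_episode(memory: AgentMemory, task: str, act: Act) -> bool:
    """Closed loop: the agent recalls, acts, and writes the feedback back to memory."""
    hints = memory.retrieve(task)  # recall is initiated by the agent loop itself
    outcome, success = act(task, hints)
    memory.write(f"{task}: {outcome}")
    return success


def toy_act(task: str, hints: list[str]) -> tuple[str, bool]:
    """Hypothetical environment: succeeds only when a past lesson is available."""
    if hints:
        return "reuse earlier fix", True
    return "try pip install", False
```

With one shared `AgentMemory` instance, calling `run_episode` twice on `"install numpy"` with `toy_act` fails the first time and succeeds the second, because the first outcome was written back. `StaticRAG.retrieve` returns the same documents no matter how often it is called.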

Agent Memory vs. LLM Memory

LLM memory-related techniques:

- Attention KV management: Mixture-of-Memory
- Long-context processing: Mamba, Memformer, MoA, Sparseformer, NSA

| Dimension | LLM Memory | Agent Memory |
|---|---|---|
| Definition | Internalized knowledge in model parameters, or temporary information in the context window | External system that supports continuous interaction between agents and the environment, cross-task learning, and long-term adaptation |
| Time Scale | Limited to pre-training data or the current dialogue context | Spans multiple tasks and sessions, supporting lifelong learning |
| Updatability | Parameter updates are costly, and context information is volatile | Supports efficient, selective dynamic updates and evolution |
| Proactiveness | Passively responds to queries | Actively decides what information to store, update, and retrieve |
| Coupling with the Environment | No direct interaction with the environment | Deeply integrates environmental feedback, supporting interactive learning |

Key Difference: LLM memory is essentially static (parameters are fixed) or transient (context is limited), while agent memory is dynamic, persistent, and environmentally coupled.
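
The "transient vs. persistent" distinction can be sketched as follows. This is an illustrative assumption, not the paper's design: `ContextWindow` models a bounded context that evicts old turns and vanishes with the session, while `PersistentMemory` (a hypothetical class backed by a JSON file) keeps selectively updated facts across sessions without touching any model parameters.

```python
from __future__ import annotations

import json
from collections import deque
from pathlib import Path


class ContextWindow:
    """Transient LLM-style memory: a bounded buffer that drops the oldest turns."""

    def __init__(self, max_turns: int) -> None:
        self.turns: deque[str] = deque(maxlen=max_turns)

    def add(self, turn: str) -> None:
        self.turns.append(turn)  # once full, the oldest turn is evicted

    def render(self) -> str:
        return "\n".join(self.turns)


class PersistentMemory:
    """Agent-style memory: facts live in an external store and outlive a session."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.facts: dict[str, str] = (
            json.loads(path.read_text(encoding="utf-8")) if path.exists() else {}
        )

    def remember(self, key: str, value: str) -> None:
        # Selective update: overwrite one fact instead of retraining anything.
        self.facts[key] = value
        self.path.write_text(json.dumps(self.facts), encoding="utf-8")

    def recall(self, key: str) -> str | None:
        return self.facts.get(key)
```

Creating a new `ContextWindow` for the next session starts from an empty buffer, whereas a new `PersistentMemory` pointed at the same file recalls what earlier sessions stored.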

Agent Memory vs. Context Engineering

Context engineering-related techniques:

- Tool-integrated reasoning: ReTool, ToolLLM, Toolformer, VTool-R1, ToRL
- Tool selection: AutoTool, VisTA
- Communication protocol: ANP, A2A, MCP, Agora

| Aspect | Context Engineering | Agent Memory |
|---|---|---|
| Focus | Input optimization for a single round or the current task | Persistence and utilization of information across multiple rounds and tasks |
| Time Dimension | Current session | Long-term history |
| Information Selection | Manually designed or heuristic rules | Automated formation, evolution, and retrieval mechanisms |
| State Management | No persistent state | Explicitly maintains an evolvable memory state |

Key Difference: Context engineering is a prompt optimization technique, while agent memory is a state management system. The former focuses on "What to input now", and the latter focuses on "What was remembered in the past and how it affects the present and future".
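
A minimal sketch of how the two can fit together, under stated assumptions: `build_context` stands for context engineering as a stateless function that packs the current input under a budget (counted in words as a crude proxy for tokens), and `MemoryState` stands for agent memory as persistent state with formation, evolution, and retrieval steps. Both names, the reinforcement counter, and the `min_count` forgetting rule are hypothetical choices for illustration, not the survey's method.

```python
from dataclasses import dataclass, field


def build_context(system: str, recalled: list[str], user_input: str, budget: int) -> str:
    """Context engineering: a stateless choice of what to feed the model now.

    The system prompt and user input are always kept; recalled items are added
    in order until the word budget would be exceeded.
    """
    parts = [system]
    used = len(system.split()) + len(user_input.split())
    for item in recalled:
        cost = len(item.split())
        if used + cost > budget:
            break
        parts.append(item)
        used += cost
    parts.append(user_input)
    return "\n".join(parts)


@dataclass
class MemoryState:
    """Agent memory: persistent state that is formed, evolved, and retrieved."""

    notes: dict[str, int] = field(default_factory=dict)  # note -> times reinforced

    def form(self, observation: str) -> None:
        # Formation: store a new note, or reinforce one seen before.
        self.notes[observation] = self.notes.get(observation, 0) + 1

    def evolve(self, min_count: int) -> None:
        # Evolution: forget notes that were not reinforced often enough.
        self.notes = {n: c for n, c in self.notes.items() if c >= min_count}

    def retrieve(self, k: int) -> list[str]:
        # Retrieval: most-reinforced notes first (ties keep insertion order).
        return sorted(self.notes, key=lambda n: -self.notes[n])[:k]
```

The split mirrors the table: `build_context` keeps no state between calls, while `MemoryState` carries what was learned into every future call of `build_context`.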