Agent Bucket: Trillion-Scale Agent Native Storage Bucket


As AI Agents spring up like mushrooms after rain, developers are building imaginative intelligent applications at an unprecedented rate. From programming assistants that help you write code to creative tools that generate a movie from a single sentence, to personal intelligent assistants that are always on standby, Agents are reshaping the way we interact with the digital world. Behind this wave, a consensus is becoming increasingly clear: with the help of Serverless architecture (such as Lambda), large language models (LLM), and cloud storage (such as S3, TOS), combined with Vibe Coding, anyone can quickly build their own AI Agent in 30 minutes.

Moving from "usable" to "easy to use," Agent developers still have to take their Agents from "toys" to "production-grade applications." As a business grows toward a massive user base, developers face an extremely complex challenge: how do you build a complete storage solution for massive numbers of end-users on top of object storage? For most developers this is not only a technical hurdle but also a gap that blocks the large-scale distribution of Agents. Agent Bucket aims to radically simplify the construction of multi-tenant systems through AI-native storage design, and to provide more Agent-friendly capabilities.

When Hundreds of Millions of Users Flood In, Traditional Object Storage is "Not Enough"

Imagine you've developed a wildly popular AIGC application. Each user will generate and store a large number of pictures, videos, and temporary files. As a developer, you will naturally choose mature and scalable object storage services like S3 and TOS. But here's the problem: how do you manage data for massive users?

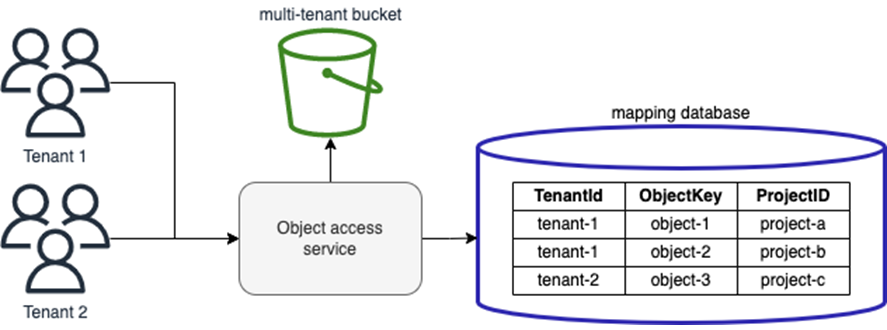

The 2022 S3 blog post "Partitioning and Isolating Multi-Tenant SaaS Data with Amazon S3" describes two methods: "using a separate S3 bucket for each tenant" and "sharing an S3 bucket based on prefix isolation":

- Create a separate "bucket" for each user: This is feasible when the number of users is small, but once users grow to tens of thousands or millions, the number of buckets explodes, and management costs and resource limits become unbearable. S3's default quota is 10,000 buckets per account, and for a popular AI application 10,000 is far from enough.

- Use a "prefix" to distinguish users within the same bucket: This has become the mainstream solution. For example, user A's files all start with user-a/ and user B's files start with user-b/, much like organizing files into folders on a computer. Object storage has no native folders, however: it is a flat key-value store, and this scheme separates tenants only by the common prefix of their keys.
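To make the prefix approach concrete, here is a minimal sketch of what the developer ends up maintaining by hand. The helper names (`tenant_key`, `list_tenant_keys`, `tenant_policy`) and the bucket name are hypothetical; the policy follows the general shape of an IAM policy scoped to a key prefix and is an illustration, not a vetted production policy.

```python
def tenant_key(tenant_id: str, filename: str) -> str:
    """Build the flat object key for a tenant's file in the shared bucket."""
    if not tenant_id or "/" in tenant_id:
        raise ValueError("tenant_id must be non-empty and contain no '/'")
    return f"{tenant_id}/{filename}"


def list_tenant_keys(all_keys: list[str], tenant_id: str) -> list[str]:
    """Emulate a prefix listing; the store itself only sees flat keys."""
    # The trailing '/' matters: without it, 'user-a' would also match 'user-ab/...'.
    prefix = f"{tenant_id}/"
    return sorted(k for k in all_keys if k.startswith(prefix))


def tenant_policy(bucket: str, tenant_id: str) -> dict:
    """IAM-style policy document that confines a tenant to its own prefix."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{tenant_id}/*",
            },
            {
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": f"{tenant_id}/*"}},
            },
        ],
    }
```

Every tenant needs its own key convention, its own listing filter, and its own policy document, and all of them must agree on the exact prefix string. That bookkeeping is the application's responsibility, not the storage system's.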

These "bucket"-based and "prefix"-based solutions have been widely adopted over the past decade, but they have the following problems:

- Multi-tenant isolation: All user data is mixed in the same bucket. Abnormally high-frequency access by one user may affect all other users, producing a "noisy neighbor" effect. Performance isolation and fault isolation are out of the question.

- Permission control: Complex permission policies (IAM Policy) are hard to maintain and easy to misconfigure, which can expose user data to accidental access. The risk exposure is even greater when interacting with other cloud services.

- Cost clarity: It is hard to know exactly how much storage each user consumes and how much traffic cost each user generates. When you want to charge paying users based on usage, billing and metering become a mess.


Why do Agent developers find implementing seemingly basic requirements on object storage somewhat "heavy"? A closer look shows that in today's cloud-native architecture there is a significant gap between "object storage" like S3 and traditional "file systems." Object storage (S3/TOS) is essentially "flat": it is designed for simple storage of massive data, like a giant warehouse. Its capacity is nearly unlimited, but its logical structure is extremely simple. It lacks native directory management, fine-grained metadata control, and true tenant awareness. When developers try to simulate a "three-dimensional" multi-tenant file system on "flat" S3 by hardcoding prefixes, they are using a "static KV store" to carry the file access patterns of an Agent application that needs "directory semantics and strong isolation." As a result, the Agent has to spend extra tokens on managing files and on resolving multi-tenant permissions and isolation. This extra token consumption shows that S3, despite being defined as a simple storage service, is not simple enough for Agents.

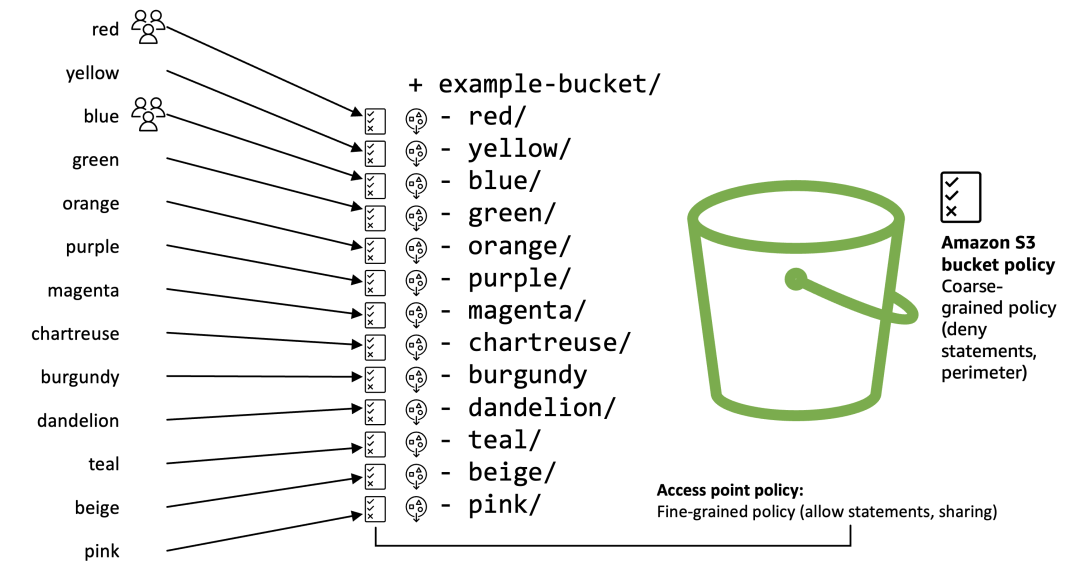

The 2025 S3 blog post "Design patterns for multi-tenant access control on Amazon S3" further elaborates on S3 Access Points. Multiple virtual network access points can be created for a bucket, each with its own access point policy, which addresses part of the multi-tenant problem at the network scheduling level.

Agent Wonderland

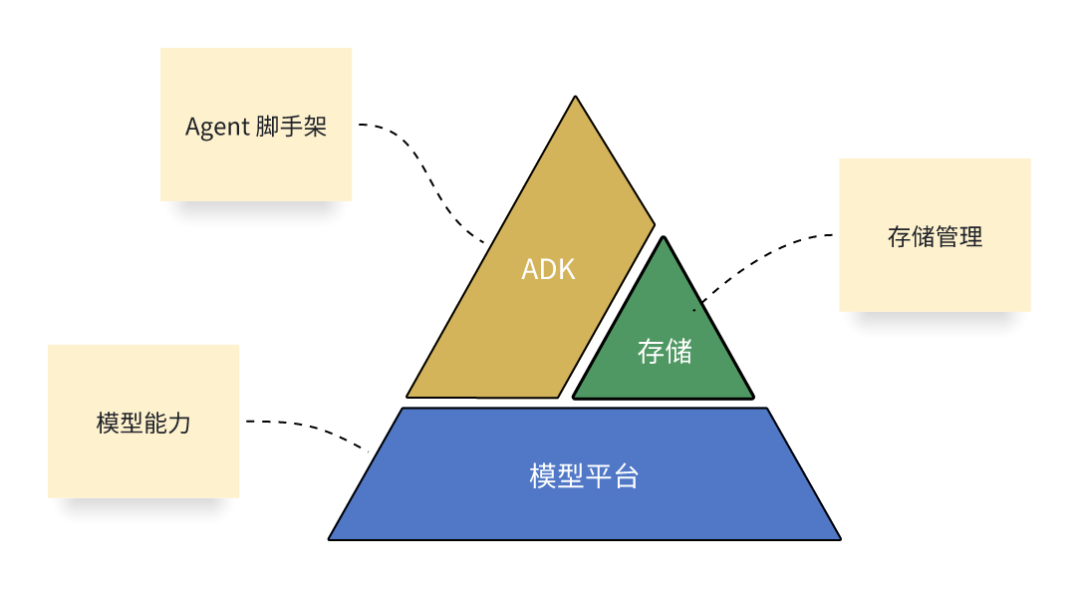

Ideally, an Agent developer, when developing an AI Agent, can build a completely serverless Agent based on "Agent SDK + Storage + MaaS service":

- The Agent can run completely serverless

- The Agent can be built by combining existing product capabilities through Vibe Coding

- Only the "ADK" Python script needs to be maintained

- Object storage is used for storage

- Doubao is used for AI capabilities

- In theory, there are no ECS or other instance-based products

At the same time, the storage needs to provide the following capabilities:

- The Agent has storage with object semantics (to save files) and multi-tenant access, starting at millions of tenants and scalable to billions

- The Agent can provide an independent space for each user (names may collide across multiple businesses, business units, or UIDs)

- The Agent can directly configure the bandwidth for each user and a total size limit on each user's objects

- The Agent can bill, monitor, and observe on a per-user basis

- The Agent can configure access policies for each user's files

Agent Bucket: Injecting "Native Multi-Tenancy" Genes into AI Agents

To fundamentally solve this problem, we propose a new object storage paradigm: Agent Bucket. Its core innovation is a new native resource level between the traditional "bucket" and "object": the object collection, or ObjectSet.

The core idea of this design is extremely simple: give each of your end users an exclusive ObjectSet. You can think of an ObjectSet as a "data safe" or "cloud personal space" tailored to each user. Logically it belongs to your (the developer's) bucket, but physically and managerially it has its own independent "personality" and "lifecycle." Each Agent Bucket supports 100 million ObjectSets, which means you can easily serve hundreds of millions of end-users, as if each end-user "lives" in their own independent storage space, without having to worry about multi-tenant storage management.

ObjectSet Design - Agent-Friendly Capabilities

In Agent Bucket, ObjectSet does more than add a level: it turns the hardest requirements of multi-tenant scenarios into out-of-the-box native capabilities. Once data ownership is clearly defined at the ObjectSet level, a series of capabilities that used to be hard to achieve follow naturally.

- Native Isolation: At the ObjectSet level, you can set independent QPS limits, bandwidth limits, and capacity quotas for each user. The experience of paying users can be guaranteed, and abnormal behavior by free users does not affect others. This is true fault domain isolation, so "neighbors" no longer interfere with each other.

- Native Permissions: Each ObjectSet can have an independent domain name. You can give user A an exclusive access address such as user-a.yourapp.com instead of exposing the domain name of the entire bucket. The design uses "two locks": the first lock is a temporary access credential (STS) issued by the cloud service provider, which controls access permissions at the application level; the second lock is the ObjectSet's independent domain name, which confines access requests to the user's own data space at the network level. Together they greatly improve data security.

- Native Monitoring: The monitoring dashboard no longer shows only bucket-wide overview data. You can break monitoring charts down by ObjectSet to see clearly which end-user is generating a large volume of accesses, and make precise operational and optimization decisions.

- Native Capability Push-Down: Policies that could previously be set only at the bucket level can now be pushed down to each user. You can set different data lifecycles for different tiers of users, or use a different encryption key for each ObjectSet, for finer-grained and more secure data management.

- Native Metering: Want to know how much storage each user occupies, or to allocate storage costs accurately to each user? Agent Bucket automatically tracks the capacity and usage of each ObjectSet, making billing and accounting clear.

- Native Billing: Developers can allocate costs and pass storage costs back to each end-user accurately. For example, users A, B, and C can each be charged in proportion to the actual cost they generate, which provides the data needed to commercialize an Agent.

- Native Capacity Limit: To control an Agent's operating costs, you can set a quota (capacity limit) for each ObjectSet. Once the preset value is reached, the system stops the user from creating new files, which prevents resource abuse in multi-tenant scenarios at the root.

- Native Intelligence: Agent Bucket lets Agents go beyond plain file reads and writes by giving objects native intelligence, which supports one-stop Agent development more efficiently. An ObjectSet can enable intelligent indexing with one click, giving Agents native multimodal question answering in place of mechanical object CRUD. It also supports one-click enabling of Agentself mode, which connects vectors, knowledge, models, and prompts and directly exposes scenario-based sub-Agent functions, so that upper-level Agent developers can focus on their main business workflows and monetize intelligence more efficiently.
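The capacity limit, metering, and billing items above can be illustrated with a small in-memory model. This is a sketch of the semantics only; the class and function names (`ObjectSet`, `QuotaExceeded`, `allocate_cost`) are hypothetical and do not represent the Agent Bucket API.

```python
from dataclasses import dataclass, field


class QuotaExceeded(Exception):
    """Raised when a write would push an ObjectSet past its quota."""


@dataclass
class ObjectSet:
    name: str
    quota_bytes: int
    objects: dict[str, int] = field(default_factory=dict)  # key -> size in bytes

    @property
    def used_bytes(self) -> int:
        # Metering: usage is tracked per ObjectSet, not per bucket.
        return sum(self.objects.values())

    def put(self, key: str, size: int) -> None:
        # An overwrite replaces the old object, so subtract its previous size.
        projected = self.used_bytes - self.objects.get(key, 0) + size
        if projected > self.quota_bytes:
            raise QuotaExceeded(f"{self.name}: {projected} > {self.quota_bytes}")
        self.objects[key] = size


def allocate_cost(sets: list[ObjectSet], total_cost: float) -> dict[str, float]:
    """Split a storage bill across ObjectSets in proportion to bytes used."""
    total_used = sum(s.used_bytes for s in sets)
    if total_used == 0:
        return {s.name: 0.0 for s in sets}
    return {s.name: total_cost * s.used_bytes / total_used for s in sets}
```

Because usage is recorded where ownership is defined, quota checks and cost allocation become simple lookups rather than scans over a shared key space.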

Technical Challenges Brought by the Explosive Growth of Application Scale

By introducing the native concept of ObjectSet, Agent Bucket gives application developers an elegant and efficient way to manage the data of hundreds of millions of end-users. Each user's digital assets are stored securely in their exclusive ObjectSet, which naturally provides isolation, billing, and quota management.

As application scale expands rapidly, the management complexity, isolation difficulty, and physical bottlenecks of massive numbers of Sets all emerge at the same time:

- Massive User Hierarchical Management Problem: When an application needs to differentiate the resources and features available to a large number of users at different tiers, it has to design and implement the tier metadata itself and tie it to object storage feature switches. Letting developers manage user tiers directly on the native Set concept is important for getting applications into production faster.

- Single Cluster Capacity Bottleneck: Although an Agent Bucket can be scaled without limit logically, its metadata is stored in a single physical cluster by default. When the total number of objects in the bucket reaches hundreds of billions or even trillions, the physical capacity of a single cluster becomes a hard limit.

- Access Point Sharing Problem: The diversity of Agent businesses and the massive number of users increase the security risk and blast radius of the access point itself. The difficulty lies in scheduling dynamically across a large number of different businesses and users while delivering differentiated security, isolation, and acceleration.

Set Tagging: Tag-Based Management for User Tiering

ObjectSet provides native tag-based management, so Agent developers can use Set tagging to handle user tiering. Developers define a tag for each user tier and enable different quotas and features per tag. Every ObjectSet carrying a tag gets the corresponding quotas and features. Taking tiers V1, V2, and V3 as examples:

- V1: The default tier for free users and the default tag for all ObjectSets. It can be configured to store up to 1 GiB of data, with public network distribution capped at 100 Mbps of bandwidth and single-stream download speed limited to 1 Mbps.

- V2: Entry-level paid members, configured to store up to 10 GiB of data, with public network distribution capped at 10 Gbps of bandwidth and single-stream download speed limited to 10 Mbps.

- V3: Advanced paid members. In addition to larger storage and public network distribution quotas, this tier supports additional public weak-network acceleration and high-performance media acceleration.

Agent developers can move users between the V1/V2/V3 tags at different stages of each user's lifecycle to control the resources and value-added features available to them.
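The tiering scheme can be sketched as a lookup from tag to policy. The V1 and V2 limits below are the ones quoted in the text, reading "mbps" and "gbps" as megabits and gigabits per second; the text gives no numbers for V3, so its limits are left unset rather than invented. The type and function names (`TierPolicy`, `effective_policy`) and the feature strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

GIB = 1024 ** 3
MBPS = 1_000_000  # bits per second


@dataclass(frozen=True)
class TierPolicy:
    max_bytes: Optional[int]             # capacity quota
    public_bandwidth_bps: Optional[int]  # public network distribution cap
    per_stream_bps: Optional[int]        # single-stream download cap
    features: frozenset = frozenset()


TIER_POLICIES = {
    "V1": TierPolicy(1 * GIB, 100 * MBPS, 1 * MBPS),
    "V2": TierPolicy(10 * GIB, 10_000 * MBPS, 10 * MBPS),
    # No concrete V3 limits are given in the text; None means "not specified here".
    "V3": TierPolicy(
        None, None, None,
        frozenset({"weak-network-acceleration", "media-acceleration"}),
    ),
}
DEFAULT_TAG = "V1"  # every ObjectSet starts with the free-tier tag


def effective_policy(set_tags: dict[str, str], set_name: str) -> TierPolicy:
    """Resolve an ObjectSet's policy from its tag, falling back to the default."""
    return TIER_POLICIES[set_tags.get(set_name, DEFAULT_TAG)]
```

Upgrading a user then means changing one tag; no per-ObjectSet quota or feature configuration has to be rewritten.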

Set Slice: Native Isolation of Massive User Data

When the number of Sets in an Agent Bucket reaches hundreds of millions and the number of objects reaches hundreds of billions or trillions, the fact that "all metadata of a single Bucket is concentrated in one KV cluster" itself brings dual risks of capacity and performance.

Set Slice provides a "logically undivided, physically split" approach:

- Logically, you still manage only one Agent Bucket.

- Physically, the metadata is divided into multiple Slices based on ranges of Set names and of object names within a Set. Each Slice can be stored on a different cluster, which gives natural isolation between Sets and horizontal scaling for a single Set.
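The range-based split described above can be sketched with a sorted list of split points over `(set_name, object_name)` keys. This is an illustration of range partitioning in general, not the actual Set Slice implementation; the `SliceMap` class, the split points, and the cluster names are assumptions made for the example.

```python
import bisect


class SliceMap:
    """Maps (set_name, object_name) to the cluster holding that key range."""

    def __init__(self, split_points: list[tuple[str, str]], clusters: list[str]):
        # N sorted split points define N + 1 contiguous key ranges.
        if len(clusters) != len(split_points) + 1:
            raise ValueError("need exactly one more cluster than split points")
        if split_points != sorted(split_points):
            raise ValueError("split points must be sorted")
        self._splits = split_points
        self._clusters = clusters

    def locate(self, set_name: str, object_name: str) -> str:
        # A split point is the inclusive lower bound of the range to its right.
        idx = bisect.bisect_right(self._splits, (set_name, object_name))
        return self._clusters[idx]


# One logical bucket, four physical slices. The large Set "user-b" spans two
# slices, while "user-a" and "user-c" each sit entirely on their own slice.
slices = SliceMap(
    split_points=[("user-b", ""), ("user-b", "m"), ("user-c", "")],
    clusters=["cluster-0", "cluster-1", "cluster-2", "cluster-3"],
)
```

Callers always address the same logical bucket; only the lookup table changes when a Slice is split or moved.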

Set Slice is a further extension and guarantee of ObjectSet capabilities. It solves the problem of unlimited physical capacity expansion at the bottom layer, while ensuring the stability and consistency of the upper-layer ObjectSet management model.

- Stable Management Boundary: Even if the data of an Agent Bucket spans multiple physical clusters, ObjectSet remains the only basic unit for permissions, quotas, billing, and monitoring. Policies that developers configure on an ObjectSet (such as access control and capacity limits) automatically take effect on all related Slices, with no need to worry about how the underlying data is distributed.

- Single Set Can Be Scaled Linearly: When the data volume of an ObjectSet grows rapidly, its data is naturally distributed across multiple Slices. As the overall cluster expands, the capacity of the ObjectSet grows seamlessly and linearly, and developers do not need to perform any destructive operations such as splitting or migrating the ObjectSet itself.

- Cross-Set Resource Isolation: By distributing objects in different ranges to different physical clusters, Set Slice achieves a higher dimension of resource isolation. Combined with ObjectSet quota management, it effectively prevents the data growth of one "super user" ObjectSet from consuming all the resources of a single cluster and destabilizing other ObjectSets, which keeps the overall capacity risk under control.

- Logical Unification and Compatibility: No matter how many Slices exist underneath, businesses and developers always see one logically unified Agent Bucket. All operations on buckets, ObjectSets, and objects stay the same, so physical expansion is completely transparent to upper-layer applications.

Set AccessPoint: Isolating Access Points for Each User

Agent Bucket supports enabling an independent access point (independent domain name) for each ObjectSet and extending that access point with differentiated security, isolation, and acceleration capabilities. The system therefore has to support scheduling and differentiated configuration for hundreds of millions of independent access points.

Independent access domain name {$apid}.tos-objectset-ap.volces.com: Two-level security protection

- First level, obscurity: Each user/ObjectSet gets an independent subdomain. The apid is a high-entropy hash with an extremely low collision probability, so it is infeasible to guess or enumerate a specific user's entry point from the access domain name.

- Second level, containment: Agent developers use STS to issue ObjectSet-level access permissions. Even if an STS credential is leaked, the exposure is limited to a single ObjectSet and to the credential's validity period.
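The two levels can be sketched as follows. The text does not say how the apid is computed, so the keyed hash below (HMAC-SHA256 truncated to 128 bits) is purely an assumption, as are the function names, the token fields `object_set` and `expires_at`, and the registry lookup. Only the domain suffix comes from the text.

```python
import hashlib
import hmac

AP_DOMAIN_SUFFIX = "tos-objectset-ap.volces.com"  # suffix quoted in the text


def make_apid(secret: bytes, bucket: str, object_set: str) -> str:
    """Derive an unguessable access point id (assumed scheme: keyed hash)."""
    msg = f"{bucket}/{object_set}".encode()
    return hmac.new(secret, msg, hashlib.sha256).hexdigest()[:32]  # 128 bits


def access_domain(apid: str) -> str:
    return f"{apid}.{AP_DOMAIN_SUFFIX}"


def authorize(host: str, token: dict, registry: dict[str, str], now: float) -> bool:
    """Allow a request only if both 'locks' agree.

    registry maps apid -> ObjectSet name; token is a simplified stand-in for
    a temporary credential scoped to a single ObjectSet.
    """
    apid, _, suffix = host.partition(".")
    target_set = registry.get(apid)
    if suffix != AP_DOMAIN_SUFFIX or target_set is None:
        return False  # lock 1: unknown or malformed entry point
    if now >= token["expires_at"]:
        return False  # lock 2: credential has expired
    return token["object_set"] == target_set  # lock 2: scoped to one ObjectSet
```

A leaked credential for one ObjectSet is useless against another user's domain, and a guessed domain is useless without a credential scoped to that ObjectSet.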

Heuristic Scheduling System: Computing scheduling strategies for hundreds of millions of domain names

- Access strategies are differentiated by user/ObjectSet tag

- Users/ObjectSets are automatically scattered across different public network entry points, which bounds the number of users affected by a single entry point failure

- Scheduling is elastic across the whole region: when any single entry point fails or is overloaded, its traffic is automatically repacked and relocated

- Users who need accelerated public network distribution are tagged for public network transmission acceleration and automatically scheduled to an acceleration entry

- Users who pose a public network risk are tagged as risk, automatically scheduled to an isolated public network entry, and given a reduced public network bandwidth quota

- Internal network cross-domain users are tagged as cross-domain and automatically scheduled onto an accelerated internal dedicated-line path

- Local domain accelerator users are tagged as accelerator and automatically have the local domain accelerator mounted
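The text does not describe the scheduling algorithm itself. As one possible illustration of "scatter users across entry points and relocate only the affected traffic on failure," the sketch below uses rendezvous (highest-random-weight) hashing within a pool chosen by tag. The technique, the function names, and the pool and entry names are all assumptions made for this example.

```python
import hashlib


def _score(apid: str, entry: str) -> int:
    """Deterministic pseudo-random weight for an (access point, entry) pair."""
    digest = hashlib.sha256(f"{apid}|{entry}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def pick_entry(
    apid: str,
    tag: str,
    pools: dict[str, list[str]],
    unhealthy: frozenset = frozenset(),
) -> str:
    """Choose an entry point for one access point.

    The tag selects the pool (e.g. an isolated pool for risky users); inside
    the pool, the healthy entry with the highest score wins.
    """
    candidates = [e for e in pools.get(tag, pools["default"]) if e not in unhealthy]
    if not candidates:
        raise RuntimeError(f"no healthy entry point for tag {tag!r}")
    return max(candidates, key=lambda e: _score(apid, e))


# Hypothetical pools keyed by tag.
POOLS = {
    "default": ["entry-1", "entry-2", "entry-3", "entry-4"],
    "acceleration": ["accel-1", "accel-2"],
    "risk": ["isolated-1"],
}
```

With this choice, marking one entry unhealthy moves only the access points that were assigned to it; every other assignment stays where it was, which keeps the impact of a single entry point failure bounded.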

From Programming Assistant to AI Cloud Drive, the Infinite Possibilities of Agent Bucket

Agent Bucket provides a complete solution for Agents, but the application scenarios of ObjectSet go well beyond Agents. It extends easily to any application that serves massive numbers of end users:

- Code Repository: In the past, enterprises or individuals hosting code in the cloud often had to build a "tenant system" on top of object storage to get account isolation and permission control. Now you can assign each developer an exclusive ObjectSet that stores code repositories, build artifacts, and dependencies together. Agent Skills also map naturally onto ObjectSet: uploading, downloading, and distributing Skills gets strong isolation through ObjectSet, which avoids noisy-neighbor interference at Agent runtime.

- Enterprise Photo Album Cloud Drive: Traditional photo album or cloud drive services often mix all users' photos in the same bucket and distinguish users by prefix, which is complicated to manage and prone to "noisy neighbor" effects. With ObjectSet, each user's photos and videos land in their own Set, and access peaks do not interfere with each other. You can also set capacity limits, backup strategies, and encryption methods per user, so that everyone truly has a secure and controllable cloud photo album.

- Hadoop Data Warehouse: In enterprise data warehouses, different business lines and databases often share resources on the same underlying storage. By mapping each database to an ObjectSet, enterprises get per-database isolation and quota control on top of unified storage. In particular, ObjectSet adds an extra permission layer on TOS, providing isolation and permission control for Databases and Tables stored on TOS without changing the existing Proton on TOS.

- Model Hosting Platform: In large model hosting scenarios, each model is not only large but may also come in different versions, weights, and inference configurations. Creating an ObjectSet for each model lets you package and host model weights, tokenizers, configuration files, and related evaluation data in the same space. The operations team can set differentiated encryption policies, backup policies, and bandwidth controls for different models. Native metering can also track the actual cost of each model, providing a basis for model-level billing and resource scheduling.

- Data SaaS Service: Data distribution platforms that serve massive numbers of end-users often need to connect to many data providers at once, keeping each party's data boundaries clear while avoiding the performance risk of "one big bucket dragging everyone down." With Agent Bucket, each data provider can have its own ObjectSet that manages raw data and processing results together. Independent domain names, bandwidth quotas, and QPS quotas provide differentiated service guarantees and rate limiting for each provider, resulting in a data distribution infrastructure of "one platform, multiple providers, isolated yet controllable collaboration."
