Bombshell Alert! Guide to Unlimited Local Tokens with Claude Code

Claude Code is powerful, but token consumption can be painful!

Finally, Claude Code can work with local models, and the setup is very simple.

The setup below was done on an M4 Mac mini. It also works on Windows.

These days, if you're into desktop AI, a Mac with an M-series chip makes a good personal AI workstation, for example an M4 or M4 Pro Mac mini, or a machine with an M3 Ultra or M4 Max.

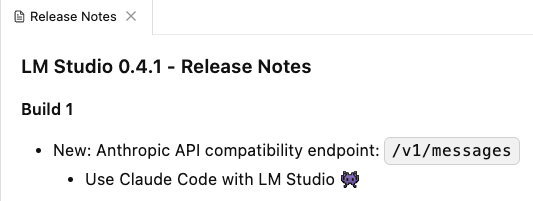

First, upgrade LM Studio to the latest version, 0.4.1 at the time of writing, because this release adds support for Claude Code. (Ollama also works.)

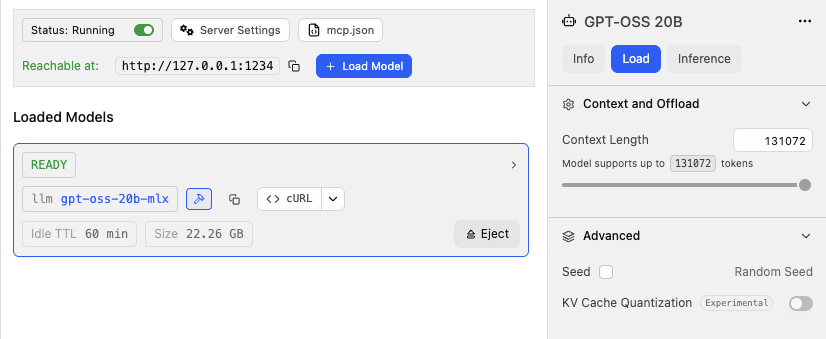

You can run any open-source model locally, as long as your Mac has enough memory. We'll use gpt-oss-20b-mlx as an example, which is an open-source model from OpenAI.

One thing to note: set the context length as high as you can, ideally the maximum the model supports. Agents run long multi-turn tasks, and their performance depends heavily on context length; a short context won't work. A longer context also costs memory and speed, so balance this setting against your Mac's memory and the model's inference speed.

Also, on a Mac, prefer models in MLX format, which run inference faster than GGUF models.
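To see why context length has to be balanced against memory, here is a rough back-of-the-envelope sketch. It assumes a standard transformer in which every layer caches keys and values for every token, so it gives an upper bound; models that use sliding-window attention or a quantized cache need less. The helper name `kv_cache_mib` and all the numbers in the example calls are placeholders for illustration, not the actual configuration of gpt-oss-20b, so check your model's config for real values.

```shell
# Rough upper bound on KV-cache size, in MiB, for a standard transformer.
# Arguments: layers, KV heads, head dimension, context tokens, bytes per value.
# The values used in the calls below are illustrative placeholders only.
kv_cache_mib() {
  layers=$1
  kv_heads=$2
  head_dim=$3
  context=$4
  bytes_per_value=$5
  # Factor 2: each layer stores one key tensor and one value tensor.
  total=$(( 2 * layers * kv_heads * head_dim * context * bytes_per_value ))
  echo $(( total / 1024 / 1024 ))
}

kv_cache_mib 24 8 64 131072 2   # prints 6144 (128K-token context)
kv_cache_mib 24 8 64 32768 2    # prints 1536 (32K-token context)
```

With these placeholder values, cutting the context to a quarter shrinks the cache from about 6 GiB to 1.5 GiB, and that comes on top of the memory the model weights already occupy.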

Next, install Claude Code from the terminal.

Configure environment variables:

    export ANTHROPIC_AUTH_TOKEN=lmstudio
    export ANTHROPIC_BASE_URL=http://localhost:1234

Install Claude Code itself:

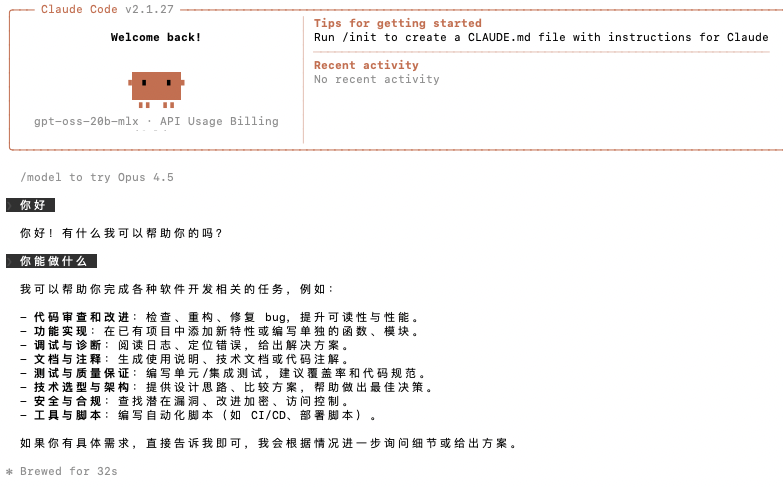

    npm install -g @anthropic-ai/claude-code

Then start Claude Code:

    claude --model gpt-oss-20b-mlx

At this point, Claude Code sends its requests to your local model.
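If Claude Code does not seem to reach the local model, a quick pre-flight check can rule out a missing variable. The sketch below uses a hypothetical helper, `check_local_setup`, which only confirms that the two variables from the steps above are set and that the base URL looks like an HTTP URL. It does not contact the server.

```shell
# Hypothetical pre-flight helper: confirms the variables configured above
# are present before launching Claude Code. It makes no network request.
check_local_setup() {
  if [ -z "${ANTHROPIC_BASE_URL:-}" ]; then
    echo "ANTHROPIC_BASE_URL is not set"
    return 1
  fi
  if [ -z "${ANTHROPIC_AUTH_TOKEN:-}" ]; then
    echo "ANTHROPIC_AUTH_TOKEN is not set"
    return 1
  fi
  case "$ANTHROPIC_BASE_URL" in
    http://*|https://*) ;;
    *)
      echo "ANTHROPIC_BASE_URL should start with http:// or https://"
      return 1
      ;;
  esac
  echo "ok: $ANTHROPIC_BASE_URL"
}

# Same values as in the steps above.
export ANTHROPIC_AUTH_TOKEN=lmstudio
export ANTHROPIC_BASE_URL=http://localhost:1234
check_local_setup   # prints "ok: http://localhost:1234"
```

If that passes but requests still fail, `curl "$ANTHROPIC_BASE_URL/v1/models"` should list the loaded models, assuming LM Studio's local server is running and serving its OpenAI-compatible model listing at that path.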

In addition to using it in the terminal, it can also be used in VS Code with the following configuration:

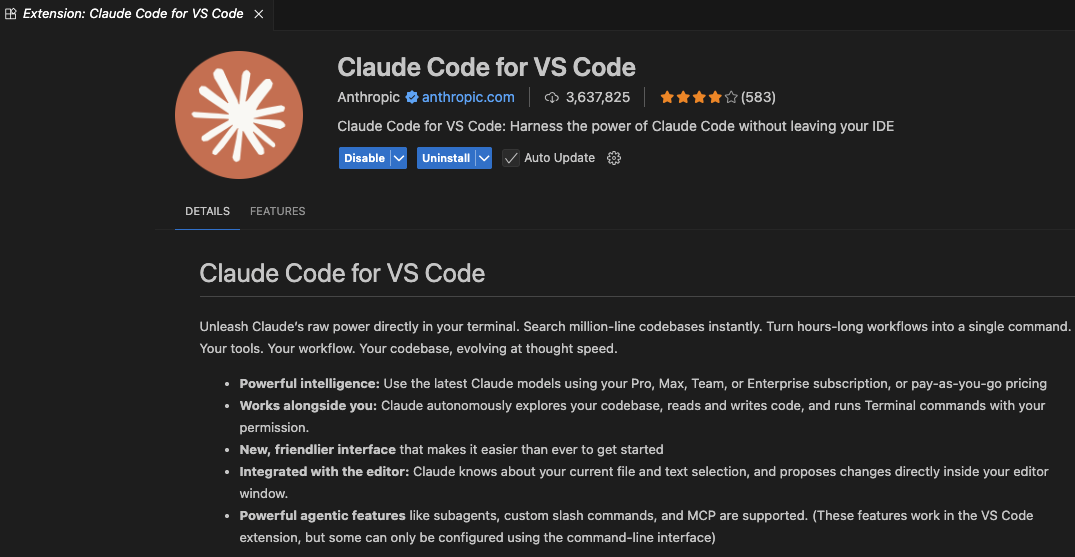

First, we install the Claude Code for VS Code extension.

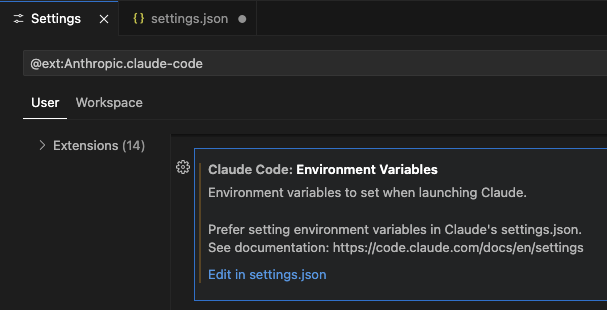

Then set the environment variables:

    {
      "claudeCode.environmentVariables": [
        { "name": "ANTHROPIC_BASE_URL", "value": "http://localhost:1234" },
        { "name": "ANTHROPIC_AUTH_TOKEN", "value": "lmstudio" }
      ]
    }

Then you can get to work.

Food for thought: Is Claude Code still the same Claude Code without using Anthropic models?

The gpt-oss-20b-mlx model used here certainly can't compare to Opus 4.5, but if you deploy Kimi K2.5 locally, its capabilities currently rival those of Opus 4.5.