Breaking Away from the NVIDIA Ecosystem: OpenAI Releases New Programming Model GPT-5.3-Codex-Spark, Speed Reaching 1000 Tokens Per Second


Just now, OpenAI released a new programming model that runs on a chip the size of a dinner plate and can output over 1000 tokens per second.

It's called GPT-5.3-Codex-Spark, and it marks the first time OpenAI has deployed a programming model entirely outside the NVIDIA ecosystem, on self-developed hardware.

Core Parameters

- Inference Speed: 1000+ tokens/second

- Latency: First-token latency of only 50 ms

- Power Consumption: Approximately 100 W (comparable to an incandescent light bulb)

- Programming Capabilities: Focused on code generation and understanding
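To put these numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It takes the quoted figures at face value and assumes a simplified model in which the first token arrives after the stated latency and every later token arrives at the stated steady rate. The function names are hypothetical and purely illustrative; none of this is a measurement.

```python
# Nominal figures quoted in the list above, not measured values.
TOKENS_PER_SECOND = 1000.0      # "1000+ tokens/second"
FIRST_TOKEN_LATENCY_S = 0.050   # "50 ms" first-token latency
POWER_WATTS = 100.0             # "approximately 100 W"


def response_time_s(num_tokens: int) -> float:
    """Estimated wall-clock time to stream num_tokens tokens.

    Assumption: the first token arrives after the quoted latency and the
    remaining tokens arrive at the quoted steady rate.
    """
    if num_tokens <= 0:
        return 0.0
    return FIRST_TOKEN_LATENCY_S + (num_tokens - 1) / TOKENS_PER_SECOND


def energy_per_token_j() -> float:
    """Joules per generated token at the quoted power and throughput."""
    return POWER_WATTS / TOKENS_PER_SECOND


if __name__ == "__main__":
    for n in (50, 500, 2000):
        print(f"{n:>5} tokens: {response_time_s(n):.3f} s")
    print(f"energy per token: {energy_per_token_j():.2f} J")
```

Under these assumptions, a 500-token completion would take a little over half a second, and each token would cost about 0.1 joules.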

Hardware Architecture

The chip uses a new architecture optimized specifically for Transformer inference. Compared with traditional GPUs, it is significantly more efficient at autoregressive generation, where output tokens are produced one at a time.

Performance Comparison

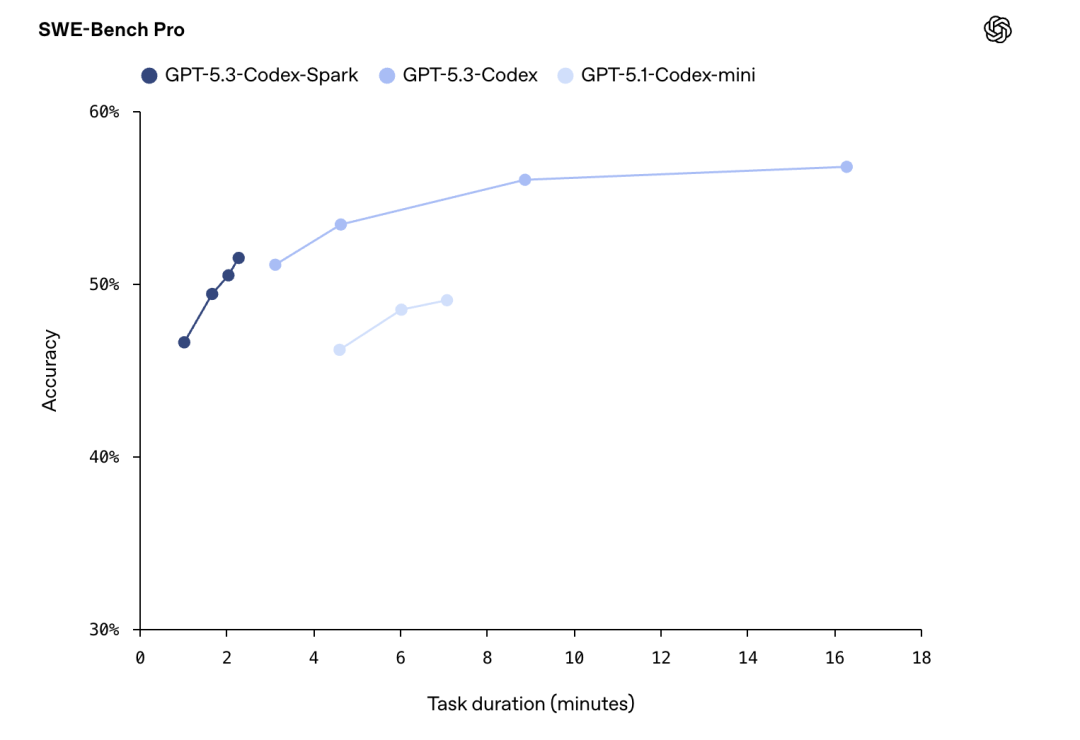

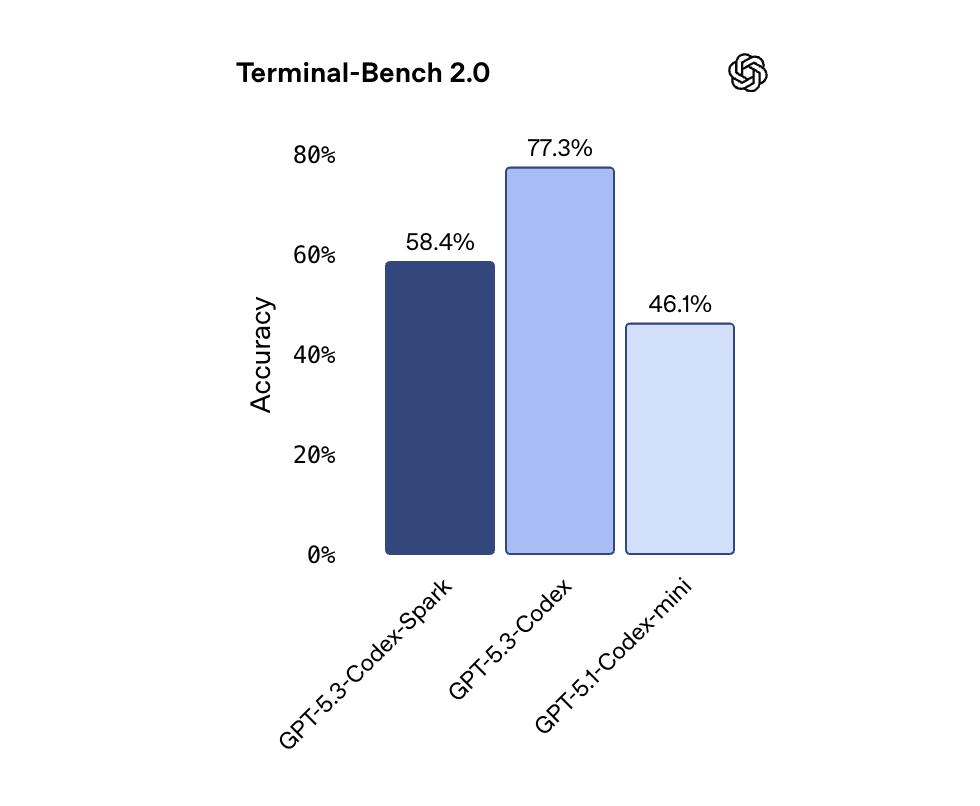

Compared with similar models, GPT-5.3-Codex-Spark is markedly faster at code generation tasks while maintaining high code quality.

Application Scenarios

- Real-time code completion

- Intelligent code review

- Automated test generation

- Code refactoring suggestions

Significance

This marks OpenAI's official entry into the race to integrate hardware and software. No longer relying on NVIDIA's GPUs means lower costs, higher efficiency, and complete control over the supply chain.

For developers, this means AI programming assistants will become faster, cheaper, and more accessible.