Burning 100 Million Tokens a Day? Programmers' AI Bills Are Punishing the 'Lazy'

Target Audience: Developers currently using AI programming tools (such as Cursor, Windsurf, Trae, and others), and technical managers who lack awareness of AI costs.

Core Viewpoint: Tokens are not just simple billing units; they are a form of "attention resource" and "computing power currency." Abusing Agent mode and neglecting context management essentially uses tactical diligence (letting AI mess around) to cover up strategic laziness (not thinking for oneself).

Your "AI Spending" Might Be Higher Than Your Salary

A few days ago, I checked my token bill. When I saw that number, I was a bit surprised: 10 million tokens. Note, this isn't monthly usage, it's one day's worth.

I thought my usage was outrageous. Then I posted a short video about counting tokens, and the comments showed me what "there's always someone better" really means.

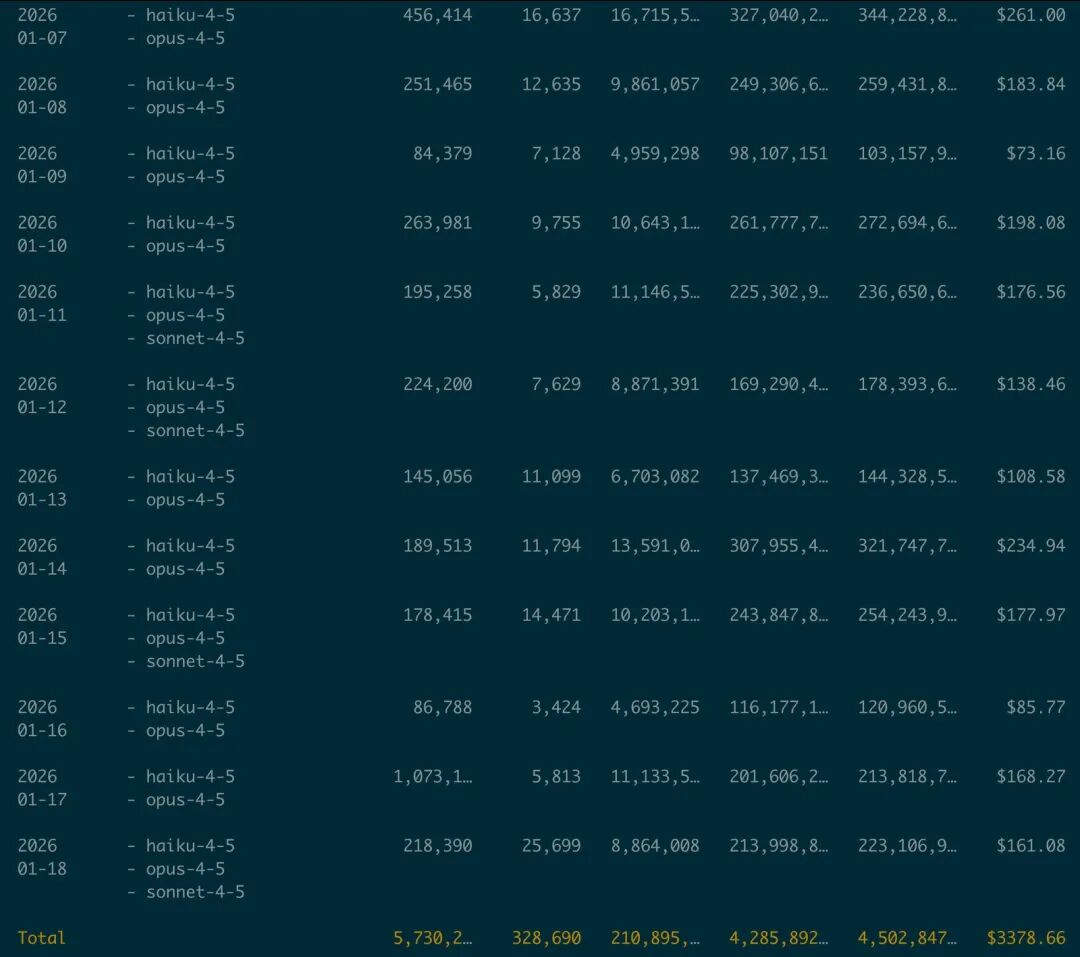

The image below is a screenshot of netizen "Old K's Daily" record of consuming 200 million tokens in a day:

At first, I thought it might be an isolated case. But when many netizens commented that they consume 100 million tokens a day, I realized this is very common.

What does 100 million tokens mean? If we estimate using the common billing scale of mainstream commercial models (input and output are billed separately; a blended rate of roughly $10 per million tokens is used here for scale), that is $1,000 burned in a single day, or about 7,000 RMB. Many junior programmers' monthly salaries would not cover the AI's "thinking" for that one day.

(Note: Prices vary greatly between different models/suppliers, and input and output unit prices are often different. The purpose here is not to calculate precisely to two decimal places, but to first establish a sense of "magnitude.")

If you want to redo the calculation yourself, this formula is usually enough (ignoring special rules such as caching and discounts):

cost ≈ (inputTokens / 1,000,000) × price_in + (outputTokens / 1,000,000) × price_out
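The formula above can be sketched in a few lines of Python. The function name, the 90/10 split between input and output tokens, and the flat $10 per million price on both sides are assumptions made for illustration, not figures from any real price list.

```python
def estimate_cost_usd(input_tokens: int, output_tokens: int,
                      price_in: float, price_out: float) -> float:
    """Estimated cost in USD; prices are USD per one million tokens."""
    return (input_tokens / 1_000_000) * price_in + (output_tokens / 1_000_000) * price_out


# Hypothetical split of a 100-million-token day, both sides priced at $10 / million.
daily_cost = estimate_cost_usd(
    input_tokens=90_000_000,
    output_tokens=10_000_000,
    price_in=10.0,
    price_out=10.0,
)
print(f"${daily_cost:,.2f}")  # prints $1,000.00
```

Swap in your own model's input and output prices to get a figure that matches your bill more closely.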

This is so counterintuitive. We always think AI is cheap; OpenAI even keeps lowering prices. But why does token consumption explode exponentially in actual engineering?

Today, let's deeply dissect the logic behind this "Token Black Hole" and how we can stop the losses.

1. Why Do Tokens "Explode Exponentially"?

Many folks have no concept of the scale of tokens. They think: "Oh, it's just sending a few lines of code, right? How much could it be?"

1. Do the Math Clearly

Let's first build a quantitative feel that is good enough for engineering. To be blunt: tokens are not a word count or a character count. They are the encoded fragments the model's tokenizer splits text into. Different models use different tokenizers, so we can only give a range, not a universally applicable constant.

Take the numbers below as an "estimation ruler" (the purpose is to judge magnitude, estimate costs, and make loss-stopping decisions):

- 1 Chinese character: Typically 1–2 tokens (high-frequency characters closer to 1, rare characters/combinations more likely 2–3)

- 1 English word: Typically around 1.2–1.5 tokens (rough estimation using 1.3 is fine)

- 1 line of code ≈ 10–50 tokens (including indentation, comments, type declarations)

  - Concise business logic ≈ 12–20 tokens

  - With type annotations, interface, JSDoc, 4-space indentation ≈ 20–35 tokens

  - With lots of imports / decorators / comments ≈ 30–50+ tokens

- 1 source file (400–600 lines, modern TS/Java project) ≈ 4,000–24,000 tokens is common (median ≈ 12,000–18,000)

- 1 medium-sized project (100–200 source files, counting only src/, excluding node_modules/ and generated code)

  - "Reading through" the core source code often starts at a million tokens

  - If you also stuff in tests, configs, scripts, dependency declarations, and logs, tens of millions of tokens is not surprising

Modern frontend projects are mostly TypeScript, full of complex interface definitions; Java projects open with dozens of lines of import statements. This boilerplate is a token killer. For a medium-sized project with 100 files, just having the AI "read the code once" can easily consume 1 million tokens.
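To turn the estimation ruler into a quick calculation, here is a minimal sketch. It uses only the 10–50 tokens-per-line range from the list above; the `TokenRange` class, the function name, and the 500-lines-per-file figure are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenRange:
    low: int
    high: int

    def scale(self, factor: int) -> "TokenRange":
        """Multiply both ends of the range by the same factor."""
        return TokenRange(self.low * factor, self.high * factor)


# Rule-of-thumb range from the list above: one line of code is roughly 10-50 tokens.
TOKENS_PER_LINE = TokenRange(10, 50)


def estimate_project_tokens(files: int, lines_per_file: int) -> TokenRange:
    """Rough token range for reading every file exactly once."""
    return TOKENS_PER_LINE.scale(files * lines_per_file)


project = estimate_project_tokens(files=100, lines_per_file=500)
print(f"{project.low:,} to {project.high:,} tokens")  # prints 500,000 to 2,500,000 tokens
```

The range is wide on purpose: the goal is to judge magnitude before letting a tool read the whole project, not to predict the exact bill.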

2. The "Snowball" Effect of Tokens

The scariest part of token consumption isn't a single conversation, but the context accumulation in multi-turn dialogues.

LLM inference is stateless: the model keeps no memory between requests. To make the AI remember what you said last time, the system typically packages together "system prompts + conversation history + files/code snippets you referenced + tool call outputs (e.g., search results, error logs)" and sends it all to the model. You think you only asked one question, but you are actually paying again and again for the entire context package.

- Round 1: Send 10k tokens, AI replies 1k.

- Round 2: Send (10k + 1k + new question), AI replies...

- Round 10: Once referenced files and tool outputs pile up, your context might have ballooned to 200k tokens.

At this point, even if you just ask "help me rename a variable," you're consuming the cost of 200k tokens. This is why you feel like you didn't do much, but the bill is skyrocketing.
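The snowball can be simulated with a short sketch. All numbers here are assumptions chosen to echo the rounds above: a 10k opening context, 1k replies, and a hypothetical 20k of new questions, referenced files, and tool output added each round.

```python
def simulate_conversation(rounds: int, first_prompt: int,
                          reply: int, new_input: int) -> list[int]:
    """Return the input tokens billed in each round of a stateless chat.

    Every round resends the whole history, so the input for a round is the
    first prompt plus all earlier replies and follow-up inputs.
    """
    billed = []
    context = first_prompt
    for _ in range(rounds):
        billed.append(context)
        # The reply and the next round's new material both join the history.
        context += reply + new_input
    return billed


per_round = simulate_conversation(rounds=10, first_prompt=10_000,
                                  reply=1_000, new_input=20_000)
print(f"round 10 input: {per_round[-1]:,}")       # prints round 10 input: 199,000
print(f"total input billed: {sum(per_round):,}")  # prints total input billed: 1,045,000
```

Under these assumptions the context grows by a fixed amount per round, but the total billed input grows with the square of the number of rounds, because each round pays for everything before it.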

Even worse: Agent mode will "actively read files." With one instruction like "help me optimize the user module," it might first scan the relevant directory, then chase dependencies, then configs, then tests... It's not being lazy; it's "doing its duty according to the default strategy," and the default strategy is often: read more, try more, iterate more.

2. Two Types of "Laziness" Are Destroying Your Engineering Ability

After reviewing the cases of those "100-million-token brothers" in the comments, I found the root cause of the token explosion isn't just the AI's consumption mechanism; it's also closely related to human laziness.

Below are two typical types of "mental laziness."

Laziness Type One: The Hands-Off Manager

Do you also have this mentality:

- "This old project is too messy, I can't be bothered to look at the logic, just throw it to the AI."

- "Cursor has an Agent mode, great, let it fix the bugs itself."

So, you throw the entire src folder to the Agent and give a vague command: "Help me optimize the user module." The Agent starts working:

- It reads 50 files (consumes 500k).

- It finds references to utils, goes to read utility classes (consumes 200k).

- It attempts a modification, gets an error, reads the error log (consumes 100k).

- It attempts a fix, gets another error...

It's frantically trying and failing, frantically consuming tokens. And you? You're scrolling on your phone, thinking how efficient you are. The truth is: The "pseudo-efficiency" you bought with money produced a pile of code you can't maintain later.
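A rough tally of the walkthrough above shows how the retries add up. The step costs are the illustrative figures from the list; the assumption that every extra retry costs the same flat 100k is a simplification, and in practice each retry tends to cost more because the context keeps growing.

```python
# Token figures are the illustrative ones from the walkthrough above.
AGENT_STEPS = [
    ("read 50 files", 500_000),
    ("read utility classes", 200_000),
    ("modify, fail, read error log", 100_000),
]


def agent_run_tokens(retries: int) -> int:
    """Total tokens if the failing modify/read-log step repeats `retries` more times."""
    base = sum(tokens for _, tokens in AGENT_STEPS)
    retry_cost = AGENT_STEPS[-1][1]  # assumed flat cost per extra retry
    return base + retries * retry_cost


for retries in (0, 5, 10):
    print(f"{retries:>2} retries: {agent_run_tokens(retries):,} tokens")
```

With these numbers the first pass costs 800,000 tokens, and ten more failed attempts push the run to 1,800,000.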

More professionally speaking, there are two layers of loss here:

- Cost Layer: Input tokens grow and iterations multiply; because each iteration resends the accumulated context, every retry adds to the bill.

- Engineering Layer: You lose context and decision-making power, ending up with an uncontrollable system where "it just runs" is good enough.

Laziness Type Two: The Everything-But-The-Kitchen-Sink Approach

When you encounter a bug, how do you throw it to the AI? Do you just Ctrl+A copy the entire error console, or directly @Codebase and let the AI find it itself?

This is called "throwing everything in." You're too lazy to locate the core problem, too lazy to filter for the key code snippets. You dump 99% useless information (noise) and 1% useful information (signal) on the AI all at once.

AI is like an amplifier.

- If you give it clear logic (signal), it amplifies your wisdom, uses fewer tokens, and produces good results.

- If you give it chaos and vagueness, it amplifies your chaos, tokens skyrocket, and it produces garbage.

3. Solution: How to Use AI Efficiently and Reduce Token Consumption

To protect your wallet, and more importantly, to protect your engineering control, we must change how we collaborate with AI.

1. The Principle of Minimal Context

This is the first principle of AI programming. Always give the AI only the minimal set of code needed to solve the current problem.

In Cursor, make good use of these operators:

- @File: Only reference relevant files, not the entire folder.

- Ctrl+L to select code: Only send the 50 or so lines you have selected to Chat, not the entire file.

- @Docs: For third-party libraries, reference the documentation instead of letting the AI guess.

This is the structured, reusable SOP I often use (follow this, and you'll see tokens visibly drop):

When collaborating with AI, aim for efficiency and precision. The steps are:

- First, clarify the goal: Briefly and clearly tell the AI the current problem and the desired outcome; don't let it guess.

- Simplify problem reproduction: Use the simplest method to reproduce the problem; paste the minimum and most critical code; don't pile on irrelevant content.

- Provide minimal necessary information: Only give the relevant 1-3 files, key functions, and the first few lines of the error stack trace; no need for full information.

- Request modification points: Ask the AI to only tell you what to change and why; don't let it rewrite all the code extensively.

- Finally, you do the final check: Perform the most concise verification to ensure the changes don't affect other areas.

In short, use the least, most critical information to let the AI work, and retain the final control and judgment.
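The SOP above can be sketched as a small prompt builder. Everything in it is hypothetical: the function names, the section titles, the five-line cutoff for the stack trace, and the sample bug are illustrative choices, not part of any tool's API.

```python
def trim_stack_trace(trace: str, max_lines: int = 5) -> str:
    """Keep only the first few non-empty lines of an error stack trace."""
    lines = [line for line in trace.splitlines() if line.strip()]
    return "\n".join(lines[:max_lines])


def build_minimal_prompt(goal: str, repro: str, snippet: str, trace: str) -> str:
    """Assemble a prompt from the goal, a minimal repro, key code, and a trimmed trace."""
    sections = [
        ("Goal", goal),
        ("Minimal reproduction", repro),
        ("Relevant code", snippet),
        ("Error (first lines only)", trim_stack_trace(trace)),
        ("Request", "List only the changes needed and why. Do not rewrite unrelated code."),
    ]
    return "\n\n".join(f"{title}:\n{body.strip()}" for title, body in sections)


# Hypothetical bug report used purely for illustration.
long_trace = "TypeError: user is undefined\n" + "\n".join(
    f"    at frame{i} (app.js:{i})" for i in range(1, 40)
)
prompt = build_minimal_prompt(
    goal="getUserName() should return '' when the user is missing",
    repro="Call getUserName(undefined)",
    snippet="function getUserName(user) { return user.name; }",
    trace=long_trace,
)
print(prompt)
```

The 40-line trace shrinks to its first five lines, and the request section asks for modification points only, which keeps both the input and the reply short. The final verification step stays with you.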

2. Also the Most Important: Think First, Then Prompt; Plan First, Then Act

Before hitting Enter, force yourself to pause for 10 seconds and ask three questions:

- What problem am I solving? (Define boundaries)

- Which core modules does this problem involve? (Filter Context)

- If I were to write it myself, how would I write it? (Provide an approach)

You are the 1; the AI is the string of 0s that follows. If the 1 isn't solid, no matter how many 0s come after it, it's just meaningless consumption.

A Few Heartfelt Words

The story of "100 million tokens a day" might not happen to everyone. But the behavior of wasting tokens is something almost every programmer using AI for coding has experienced.

AI makes programming easier, but there is still a threshold. Only those who truly know how to use it get the full boost, like a tiger that has grown wings.

Before, your bad code would only "disgust" your colleagues. Now, the laziness you indulge in directly turns into numbers on a bill, punishing yourself with soaring costs.

So, don't be a "hands-off manager." Be an AI architect who thinks deeply, expresses precisely, and plans before acting. That is also what makes us hardest to replace in this era.