Everything is a File: The Design Philosophy from Unix to AI Agent

Original by Ethan Yecheng

Echoes Across Half a Century

Back in the early 1970s at Bell Labs, Ken Thompson and Dennis Ritchie, the fathers of Unix, first proposed a design principle so bold it bordered on obsession: Everything is a file.

More than fifty years later, AI Agent frameworks are exploding. Manus, Claude Code, OpenClaw... they come from different teams, different technology stacks, and different business goals, but they have all made the same choice: to use the file system as the cognitive skeleton of the Agent.

Manus gives the Agent a virtual machine, and task outputs are written to disk as files. Claude Code reads and writes directly on the user's local file system and uses a CLAUDE.md file to carry project instructions and context. OpenClaw and other open-source frameworks likewise organize task decomposition and intermediate state in a directory structure.

When engineers separated by half a century, facing completely different technical problems, independently converge on the same solution - this is not a coincidence, it is a resonance of design philosophy.

The Decision of Unix

To understand the weight of this, you must first go back to what Unix did.

The design of the Unix file system is widely recognized as one of the most elegant designs in the history of computer science. It solves an extremely complex problem: how to manage diverse hardware and data resources with a unified and simple interface.

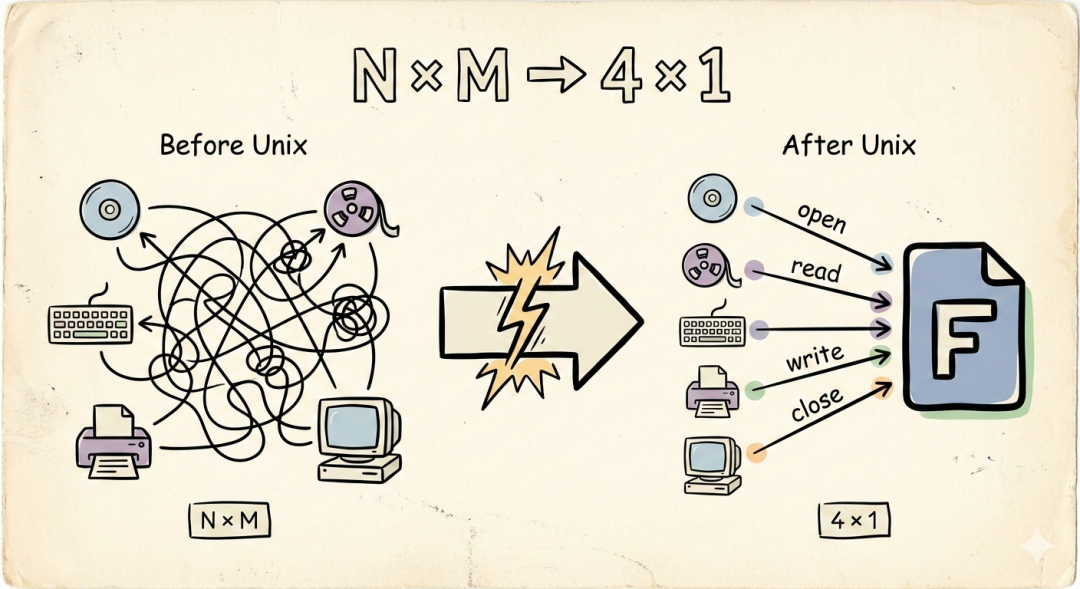

Before the 1970s, operating systems typically worked like this: to read a disk, you called the disk interface; to read a tape, you called the tape interface; to access a terminal, you called the terminal interface. Each device had its own API, and each API had its own semantics. With N types of devices and M types of operations, the number of interfaces to learn and maintain grows as N × M.

Thompson and Ritchie did something that seemed simple to the point of being foolish:

Turn everything into a file. Use the four verbs open, read, write, and close to operate everything.

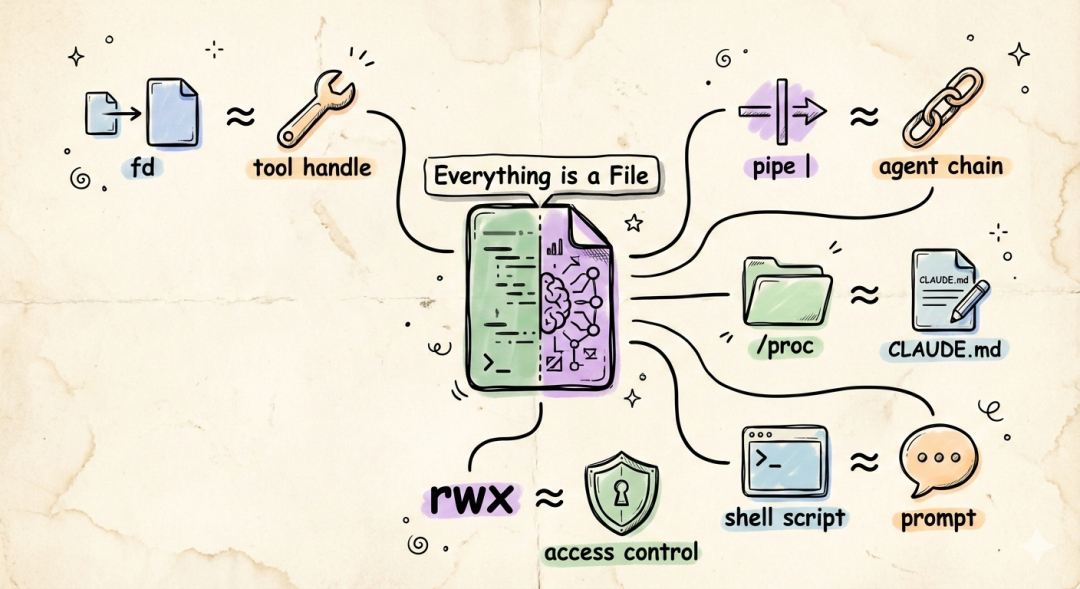

Its core idea is that resources in the operating system (documents, directories, hard drives, modems, keyboards, printers, and in later systems even network connections and process information) can be presented as a file: a stream of bytes.

This means that you only need to learn one set of APIs—open(), read(), write(), close()—to operate all the resources of the computer.

From then on, the complexity collapsed from N × M to 4 × 1. Four verbs, one layer of abstraction.

The genius of this is not in the noun "file", but in a deeper insight:

You don't need to know what's behind the file descriptor. The interface is the contract.

An fd (file descriptor) is an opaque handle. You read() it, and a stream of bytes comes out. As for whether these bytes come from a hard drive sector, a network card buffer, or the standard output of another process—you don't care, and you shouldn't care.
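To make the "opaque handle" idea concrete, here is a minimal Python sketch, assuming a POSIX-like system. The helper names (`read_all`, `from_regular_file`, `from_pipe`) are invented for illustration; only the `os` calls are real. The same `read_all` function drains a descriptor backed by a disk file and one backed by a pipe, without knowing which is which.

```python
import os
import tempfile


def read_all(fd: int, chunk_size: int = 4096) -> bytes:
    """Drain a file descriptor with read() until end-of-file."""
    chunks = []
    while True:
        chunk = os.read(fd, chunk_size)
        if not chunk:  # an empty read means EOF
            break
        chunks.append(chunk)
    return b"".join(chunks)


def from_regular_file(payload: bytes) -> bytes:
    """Write payload to a file on disk, then read it back through an fd."""
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "data.bin")
        fd = os.open(path, os.O_WRONLY | os.O_CREAT, 0o600)
        try:
            os.write(fd, payload)  # small payload assumed, so one write suffices
        finally:
            os.close(fd)
        fd = os.open(path, os.O_RDONLY)
        try:
            return read_all(fd)
        finally:
            os.close(fd)


def from_pipe(payload: bytes) -> bytes:
    """Send payload through an anonymous pipe and read it from the other end."""
    read_fd, write_fd = os.pipe()
    try:
        os.write(write_fd, payload)  # small payload assumed (fits the pipe buffer)
    finally:
        os.close(write_fd)  # closing the write end lets the reader see EOF
    try:
        return read_all(read_fd)
    finally:
        os.close(read_fd)
```

Nothing in `read_all` mentions disks or pipes; the caller decides where the bytes come from, and the reader only honors the contract.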

This is the power of a unified interface: it makes ignorance an advantage.

The Same Question Faced by Agents

Now let's look back at the situation of AI Agents.

To complete complex tasks, an Agent faces a predicament strikingly similar to that of operating systems in the 1970s:

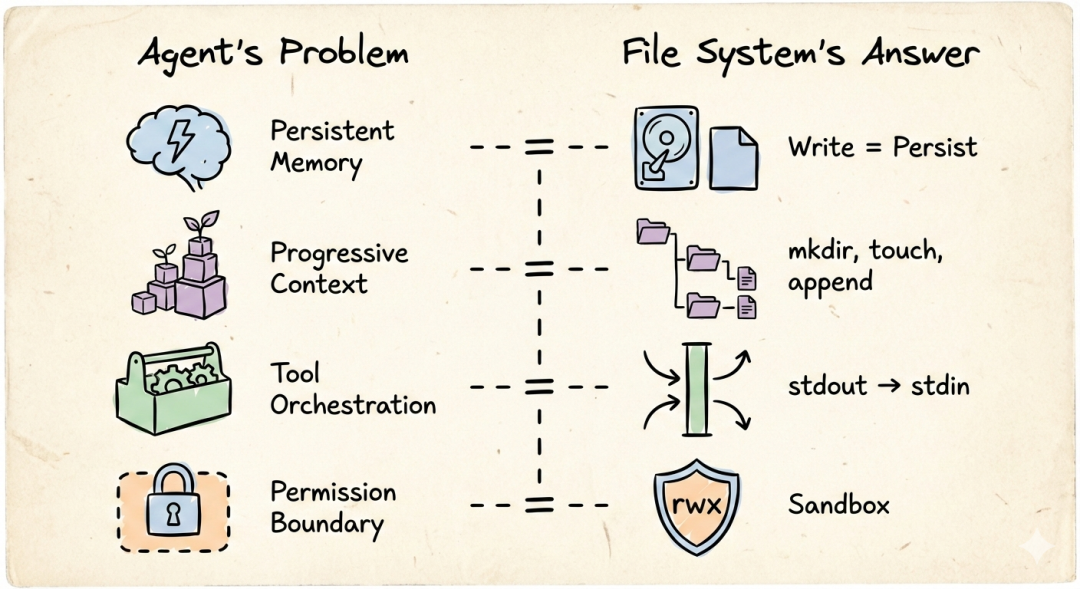

- Persistent Memory: The context window of LLMs is volatile, and the chain of thought disappears with the session. Just like memory being reclaimed after a process exits—you need a place to persist the intermediate state, otherwise every conversation starts from scratch.

- Incremental Context: Complex tasks cannot be completed in one step. Agents need to gradually accumulate context in multiple rounds of reasoning, just like Unix processes pass state between multiple executions by reading and writing files. The file system naturally provides this "write a little, read a little, write a little more" incremental working mode.

- Unified Scheduling of Tools and Skills: Agents need to call heterogeneous tools (Tools/Skills) such as search, code execution, and image generation, just as Unix needs to manage heterogeneous devices such as disks, networks, and printers. You need a unified abstraction layer; otherwise you have to write new integration logic for every tool you add.

- Permission Boundaries for Computer Use: When an Agent has the ability to operate a computer, "what it can touch and what it cannot touch" becomes a matter of life and death. Unix's file permission system (rwx) happens to provide a ready-made sandbox model—directories are boundaries, and permissions are contracts.
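The "directories are boundaries" idea in the last item can be sketched as a path check that an Agent runtime might apply before touching a file. This is an illustrative assumption, not the mechanism of any framework named here: `resolve_inside` is a hypothetical helper, and it requires Python 3.9+ for `Path.is_relative_to`.

```python
from pathlib import Path


def resolve_inside(root: Path, requested: str) -> Path:
    """Return the absolute path for `requested`, or raise if it escapes `root`."""
    root = root.resolve()
    # resolve() collapses ".." segments and follows symlinks before the check
    candidate = (root / requested).resolve()
    if not candidate.is_relative_to(root):
        raise PermissionError(f"{requested!r} is outside the workspace")
    return candidate
```

A check like this only complements operating-system permissions. It runs inside the Agent process, so it cannot stop code that bypasses it, and a path can change between the check and its use.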

Four requirements. Sound familiar?

This is exactly the problem that operating systems faced in the 1970s.

- Persistent memory: the file system solves it by construction, because writing is persisting.

- Incremental context: a directory tree is itself built incrementally. With mkdir, touch, and append, the context grows with the files.

- Unified scheduling of tools: this is the essence of Unix pipes, where the stdout of one process is the stdin of another and the medium between them is a byte stream. The same holds for an Agent's toolchain: the output file of one step is the input of the next.

- Permission boundaries: the file system's rwx permissions and the chroot sandbox define the Agent's "circle of competence".
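The pipe mechanism described above can be sketched in Python with two child processes, where the first one's stdout is wired directly to the second one's stdin. The two "tools" are stand-ins invented for this example (an uppercasing step and a line-sorting step), and `run_pipeline` is a hypothetical name.

```python
import subprocess
import sys

# Two tiny stand-in "tools"; each only knows how to read stdin and write stdout.
UPPERCASE = "import sys; sys.stdout.write(sys.stdin.read().upper())"
SORT_LINES = "import sys; sys.stdout.writelines(sorted(sys.stdin))"


def run_pipeline(text: str) -> str:
    """Feed text through UPPERCASE | SORT_LINES and return the final output."""
    first = subprocess.Popen(
        [sys.executable, "-c", UPPERCASE],
        stdin=subprocess.PIPE,
        stdout=subprocess.PIPE,
    )
    second = subprocess.Popen(
        [sys.executable, "-c", SORT_LINES],
        stdin=first.stdout,  # the first tool's stdout is the second tool's stdin
        stdout=subprocess.PIPE,
    )
    first.stdout.close()  # the parent drops its copy so EOF can propagate
    first.stdin.write(text.encode("utf-8"))  # small input assumed
    first.stdin.close()
    output, _ = second.communicate()
    first.wait()
    return output.decode("utf-8")
```

Neither tool knows the other exists. Swapping in a different second step requires no change to the first, which is the composability the byte stream buys.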

So when the designers of Agent frameworks faced the question of "where to put the Agent's working state", the answer was almost predetermined: in the file system. It is hard to find a simpler solution that satisfies all four constraints at the same time.
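As a rough illustration of working state kept in the file system, the sketch below uses a hypothetical `Workspace` class that appends one JSON record per step to a log file. The class, the file name `notes.jsonl`, and the record fields are all assumptions made for this example, not the layout of any framework mentioned above.

```python
import json
from pathlib import Path


class Workspace:
    """Hypothetical agent workspace: all state is plain files under one directory."""

    def __init__(self, root: Path) -> None:
        self.root = root
        self.root.mkdir(parents=True, exist_ok=True)
        self.log = self.root / "notes.jsonl"

    def remember(self, step: str, result: str) -> None:
        """Append one record; earlier records are never rewritten."""
        with self.log.open("a", encoding="utf-8") as f:
            f.write(json.dumps({"step": step, "result": result}) + "\n")

    def recall(self) -> list[dict]:
        """Reload everything written so far, including by earlier sessions."""
        if not self.log.exists():
            return []
        with self.log.open(encoding="utf-8") as f:
            return [json.loads(line) for line in f if line.strip()]
```

A second `Workspace` object pointed at the same directory sees what the first one wrote, which stands in for a new session picking up where the previous one stopped.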

When a system needs to "manage the interaction of a large number of heterogeneous resources", you have two paths:

Route A: Design a dedicated interface for each resource. N resources × M operations = NM interfaces. Precise but explosive.

Route B: Find a thin enough abstraction layer to make all resources wear the same clothes. 4 operations × 1 layer of abstraction. Rough but composable.

Unix chose B. More than fifty years later, Agent frameworks chose B again.
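The difference between the two routes can be shown in a small Python sketch. Under Route A, moving data between three kinds of storage would need a dedicated routine for each of the nine source and sink pairs. Under Route B, one routine written against `read()` and `write()` covers all of them. The names `transfer`, `BACKENDS`, and `exercise_all_pairs` are invented for this example.

```python
import io
import itertools
import tempfile
from typing import BinaryIO, Callable


def transfer(source: BinaryIO, sink: BinaryIO) -> int:
    """Route B: one routine written against read()/write() only."""
    data = source.read()
    sink.write(data)
    return len(data)


# Three storage backends that share the same byte-stream interface.
BACKENDS: dict[str, Callable[[], BinaryIO]] = {
    "memory": io.BytesIO,
    "temp_file": tempfile.TemporaryFile,
    "spooled": tempfile.SpooledTemporaryFile,
}


def exercise_all_pairs(payload: bytes) -> int:
    """Run transfer() across every (source, sink) pair and count the successes."""
    succeeded = 0
    for make_source, make_sink in itertools.product(BACKENDS.values(), repeat=2):
        with make_source() as source, make_sink() as sink:
            source.write(payload)
            source.seek(0)  # rewind so transfer() reads from the start
            transfer(source, sink)
            sink.seek(0)
            if sink.read() == payload:
                succeeded += 1
    return succeeded
```

Adding a fourth backend would add seven new pairs, yet `transfer` would stay untouched. That is the "rough but composable" trade in miniature.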

One Level Deeper: Files are the Externalization of Thought

But if we only stop at "the convergence of technical solutions", we will miss something more essential.

Think about how humans themselves handle complex tasks.

You receive a big project, and the first thing you do is not to start working, but to: create folders. Project root directory, subtask directory, reference material directory, output directory. You use the directory structure to break down chaotic tasks into manageable units. You use filenames to name each unit. You use file content to record the thinking process and intermediate products.

The file system is not just a storage solution. It is the original tool for humans to externalize thought.

This insight explains why Agent frameworks converge on the file system: an LLM's "thinking" needs to be externalized. Its context window is limited, so long-range reasoning must rely on external memory, and the file system happens to be the most universal "external memory" format humans have invented.

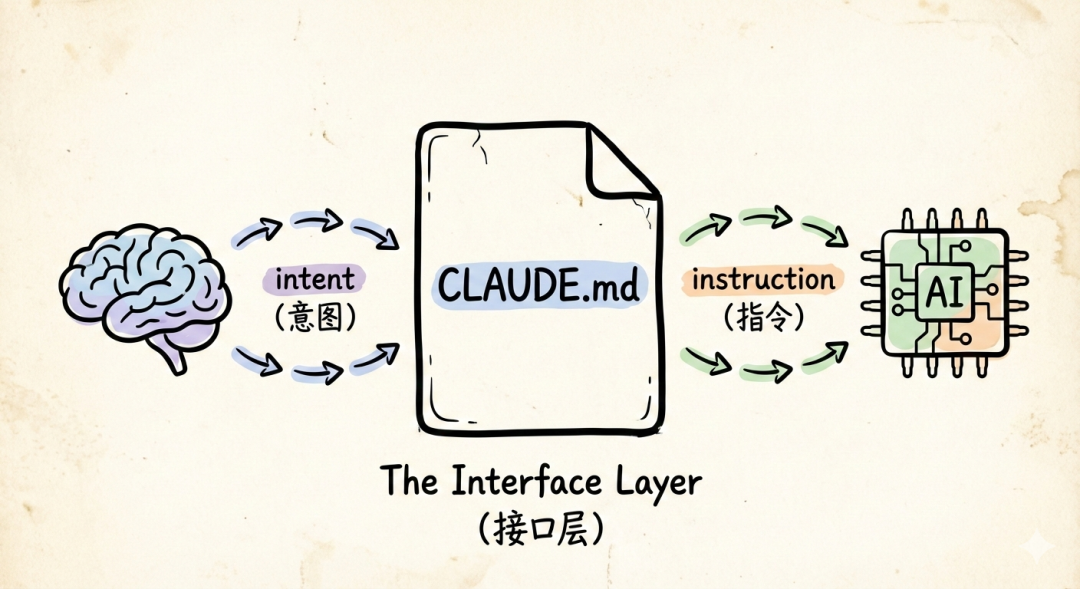

From this perspective, CLAUDE.md in Claude Code is not a configuration file. It is a kind of externalized cognitive contract—humans write intentions into files, and Agents read files as intentions. Files become the interface layer between the human mind and artificial intelligence.
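A minimal sketch of "humans write intentions into files, and Agents read files as intentions" might look like the following. It is not how Claude Code actually loads CLAUDE.md; `build_prompt` and the section headings it emits are hypothetical, chosen only to show a file acting as the interface between the two sides.

```python
from pathlib import Path


def build_prompt(project_dir: Path, user_request: str, filename: str = "CLAUDE.md") -> str:
    """Prepend the project's instruction file, if present, to the user's request."""
    instructions_path = project_dir / filename
    if instructions_path.is_file():
        instructions = instructions_path.read_text(encoding="utf-8").strip()
    else:
        instructions = ""  # no instruction file: the request stands alone
    sections = []
    if instructions:
        sections.append(f"# Project instructions\n{instructions}")
    sections.append(f"# Request\n{user_request}")
    return "\n\n".join(sections)
```

The human edits a text file with any editor, and the Agent side needs nothing more than a file read. Neither has to know anything about the other's tooling.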

This is strikingly consistent with the philosophy of Unix pipes:

Write programs to handle text streams, because that is a universal interface.

Replace "programs" with "agents" and "text streams" with "files", and the statement still holds in 2026.

Back to First Principles

Great abstractions never go out of style; they simply find new instances in new domains.

"Unifying interfaces resolves complexity" is not a Unix invention; it is a timeless law of system design. Unix happened to implement it under the name "file". AI Agents happen to implement it again in the form of a "working directory".

The next generation of systems will also face the same choice again: design dedicated interfaces for each thing, or find a thin, general, composable abstraction?

If history has taught us anything, the answer has long been written next to /dev/null:

Keep it simple. Make it compose. Everything is a file.