No Parameter Tuning, Just Code! Jeff Clune's Team's New Work: Meta Agent Automatically Evolves Memory Modules

On the road to Software 3.0, AI is starting to write its own Python code to evolve its brain.

In Agent development, memory has always been a persistent pain point.

Foundation models keep getting more capable, but they are essentially stateless at inference time, which limits an Agent's ability to accumulate experience over time.

Today's mainstream approaches to Agent memory, whether retrieval-augmented generation (RAG) or sliding-window summarization, still boil down to heuristic rules designed by hand.

Such handcrafted memory modules are brittle and hard to transfer. Prompts and retrieval logic carefully tuned for dialogue systems often break outright when moved to long-horizon planning tasks (such as ALFWorld) or complex strategy games.
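
To make "manually designed heuristic rules" concrete, here is a minimal sketch of a sliding-window summarization memory. The class name, the window size, and the word-truncation `_summarize` placeholder (standing in for an LLM summarization call) are all hypothetical; this is not code from ALMA or from any particular library.

```python
from collections import deque


class SlidingWindowMemory:
    """Hand-written memory heuristic (hypothetical illustration).

    Keeps the last `window` observations verbatim and folds older ones
    into a running summary. Every choice (window size, what to compress,
    how to compress it) is fixed by the engineer rather than learned.
    """

    def __init__(self, window: int = 3):
        self.window = window
        self.recent = deque()   # most recent raw observations
        self.summary = []       # compressed older observations

    def _summarize(self, text: str) -> str:
        # Stand-in for an LLM summarization call: keep the first four words.
        return " ".join(text.split()[:4])

    def add(self, observation: str) -> None:
        self.recent.append(observation)
        if len(self.recent) > self.window:
            self.summary.append(self._summarize(self.recent.popleft()))

    def context(self) -> str:
        # Text handed to the agent: compressed history first, then recent steps.
        parts = []
        if self.summary:
            parts.append("Summary: " + "; ".join(self.summary))
        parts.extend(self.recent)
        return "\n".join(parts)
```

Moving this module from a dialogue setting to, say, ALFWorld means revisiting each hard-coded choice by hand, which is exactly the fragility described above.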

In response, the team of Jeff Clune, a professor at UBC and a former researcher at OpenAI, has proposed a decidedly geeky solution.

Since we don't know what kind of memory structure is best, let the Agent write its own Python code to design it.

This is the newly released ALMA (Automated meta-Learning of Memory designs for Agentic systems).
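
In ALMA, a memory design is a program rather than a set of tuned parameters. As an illustration only, the sketch below assumes a tiny two-method interface (`update` and `retrieve`; these names are hypothetical, not ALMA's actual API) and shows one candidate design a Meta Agent could propose against it.

```python
from __future__ import annotations

from abc import ABC, abstractmethod


class MemoryDesign(ABC):
    """Hypothetical interface that a generated memory module might implement."""

    @abstractmethod
    def update(self, task: str, trajectory: list[str], success: bool) -> None:
        """Store whatever this design considers worth keeping from one episode."""

    @abstractmethod
    def retrieve(self, task: str) -> str:
        """Return text to add to the agent's prompt before a new task."""


class SuccessfulTrajectoryMemory(MemoryDesign):
    """One candidate design: keep successful episodes, recall them by word overlap."""

    def __init__(self, top_k: int = 1):
        self.top_k = top_k
        self.episodes = []  # list of (task word set, trajectory) pairs

    def update(self, task, trajectory, success):
        if success:  # this particular design simply discards failed episodes
            self.episodes.append((set(task.lower().split()), trajectory))

    def retrieve(self, task):
        words = set(task.lower().split())
        ranked = sorted(self.episodes, key=lambda ep: len(words & ep[0]), reverse=True)
        return "\n".join(" -> ".join(traj) for _, traj in ranked[: self.top_k])
```

Because every design satisfies the same interface, the surrounding agent code never changes; only the class body is rewritten during the search.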

From ADAS to ALMA: Code-Based Automated Design

ALMA continues the line of work on AI-generating algorithms that the team has been pushing in recent years.

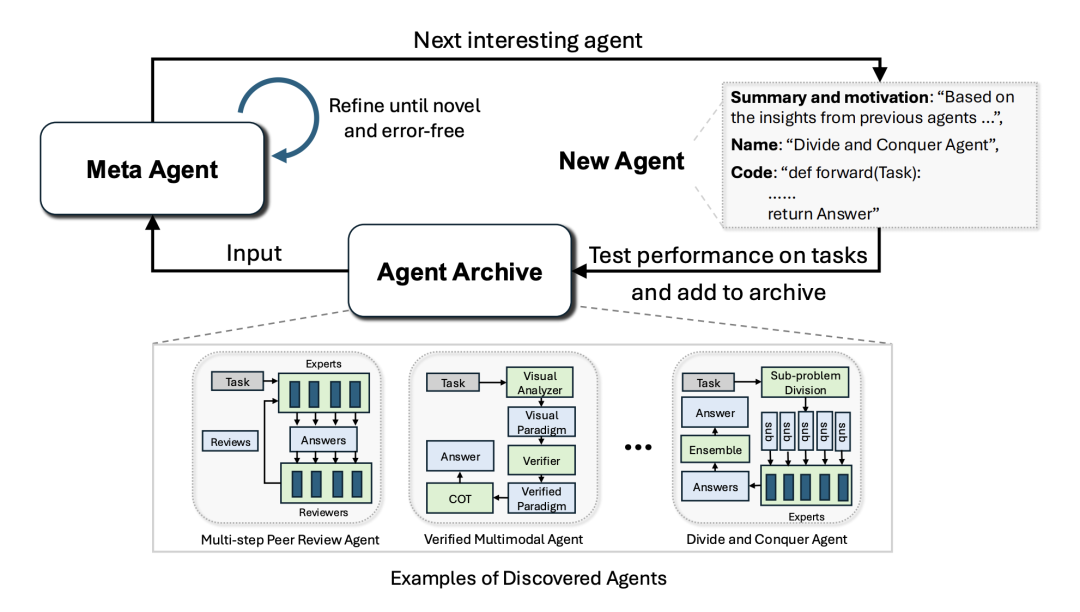

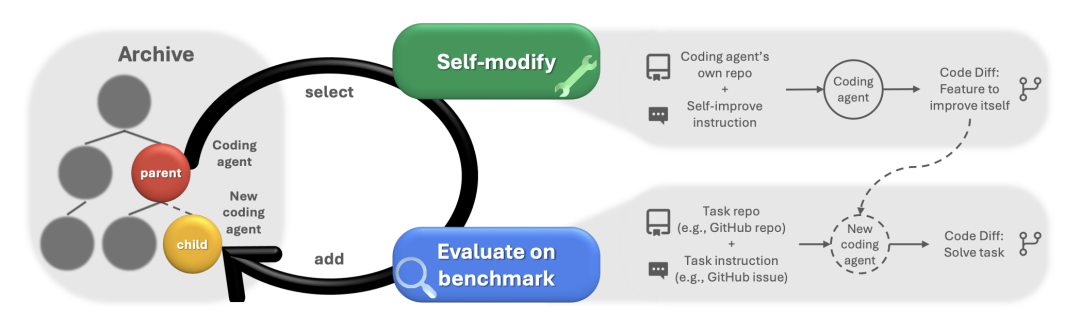

In ADAS (Automated Design of Agentic Systems), the team showed that, for designing Agent architectures, code is a more efficient search space than neural network weights or soft prompts: code is Turing-complete and highly interpretable.

Later, in DGM (Darwin Gödel Machine), the team brought in open-ended exploration from evolutionary algorithms, maintaining an archive of designs to encourage the model to explore novel solutions.
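
The archive idea can be sketched in a few lines. This is a simplified parent-selection rule in the spirit of open-ended, archive-based search, not the exact rule used in DGM or ALMA: each archived design is weighted by its score and down-weighted by how many children it already has, and nothing is ever removed. The `ArchiveEntry` fields and the `epsilon` constant are assumptions.

```python
import random
from dataclasses import dataclass


@dataclass
class ArchiveEntry:
    code: str          # source code of one design
    score: float       # evaluated success rate in [0, 1]
    children: int = 0  # number of designs already derived from this one


def selection_weights(archive, epsilon=0.05):
    # Higher score -> more likely to be chosen; many children -> less likely,
    # so under-explored branches keep getting a chance. epsilon keeps even
    # zero-score designs selectable.
    return [(e.score + epsilon) / (1 + e.children) for e in archive]


def pick_parent(archive, rng):
    parent = rng.choices(archive, weights=selection_weights(archive), k=1)[0]
    parent.children += 1
    return parent


def add_to_archive(archive, code, score):
    # Open-ended: every evaluated design is kept, even weak ones.
    archive.append(ArchiveEntry(code, score))
```

Keeping weak designs around matters because a low-scoring design can still be a stepping stone toward a much better one later.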

ALMA combines the code-generation paradigm of ADAS with the evolutionary strategy of DGM and applies them to the part of an Agent system that has relied most heavily on human expertise: memory.

How ALMA Works

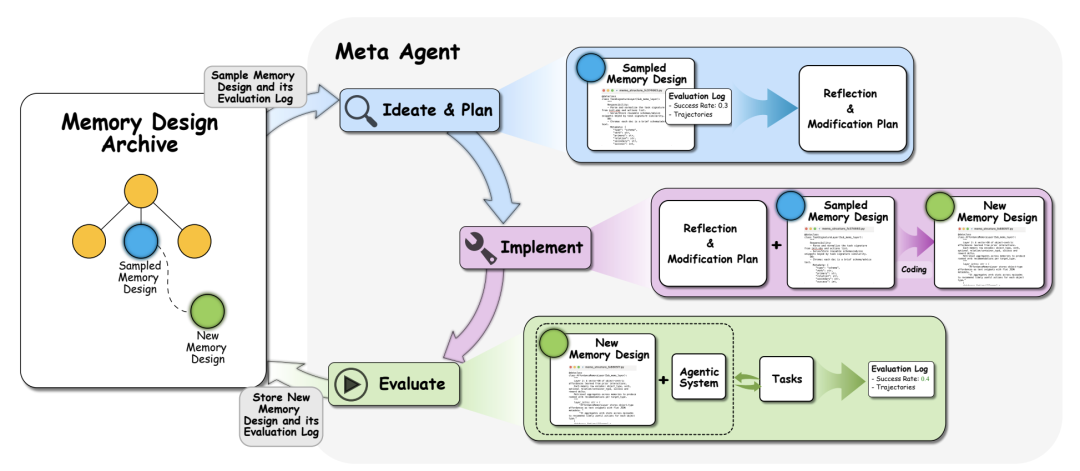

ALMA runs a standard meta-learning loop. The Meta Agent no longer solves tasks directly; instead, it writes code. Each iteration has four stages:

- Ideation: Analyze the current memory design archive and conceive improvement plans based on historical performance.

- Planning: Translate the ideas into pseudocode logic.

- Implementation: Write executable Python code that defines the memory module's core functions.

- Evaluation: Deploy the generated code to a sandbox environment to perform tasks and provide feedback on performance metrics.
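
The four stages can be sketched as a single loop. This is a toy under explicit assumptions: `llm` is any prompt-to-text function you supply, `evaluate` is a hypothetical scorer that runs a design's code on tasks (a real system would do this in an isolated sandbox), and the parent is picked greedily for brevity, whereas ALMA keeps an archive and explores it more broadly.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Design:
    code: str
    score: float
    parent: Optional[Design] = None


def meta_learning_loop(
    llm: Callable[[str], str],         # assumed: any prompt -> text function
    evaluate: Callable[[str], float],  # assumed: runs a design on tasks, returns success rate
    seed_code: str,
    iterations: int = 3,
) -> list[Design]:
    archive = [Design(seed_code, evaluate(seed_code))]
    for _ in range(iterations):
        # Greedy parent choice keeps the sketch short; ALMA explores its archive more broadly.
        parent = max(archive, key=lambda d: d.score)
        history = "\n".join(f"design {i}: score={d.score:.2f}" for i, d in enumerate(archive))

        # 1. Ideation: reflect on past designs and propose an improvement.
        idea = llm(f"IDEA\n{history}\nBest design:\n{parent.code}")
        # 2. Planning: turn the idea into pseudocode.
        plan = llm(f"PLAN\n{idea}")
        # 3. Implementation: write executable Python for the memory module.
        code = llm(f"CODE\n{plan}\nStart from:\n{parent.code}")
        # 4. Evaluation: score the new design; broken code scores 0 instead of crashing.
        try:
            score = evaluate(code)
        except Exception:
            score = 0.0
        archive.append(Design(code, score, parent))
    return archive
```

Treating a crashing design as a zero score, rather than aborting, lets the search keep going when the Meta Agent writes buggy code.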

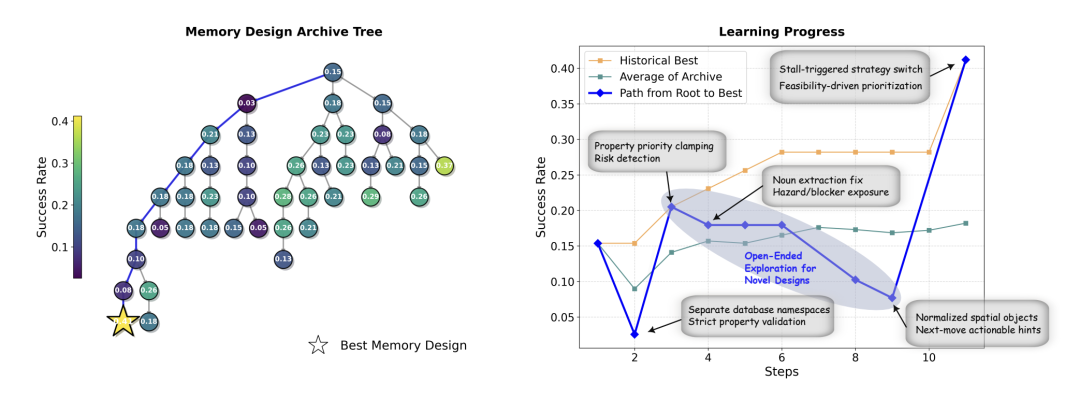

As evolution proceeds, ALMA builds up a large tree of designs. Over successive iterations, the generated memory code evolves from simple storage logic into complex cognitive architectures.
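
A design tree is just nodes with parent links, which makes it easy to trace how the best design came about. A minimal sketch; the `Node` class and its fields are illustrative assumptions, not ALMA's data structures.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass(eq=False)  # compare nodes by identity; avoids recursing through links
class Node:
    label: str
    score: float
    parent: Optional[Node] = None
    children: list[Node] = field(default_factory=list)

    def add_child(self, label: str, score: float) -> Node:
        child = Node(label, score, parent=self)
        self.children.append(child)
        return child


def all_nodes(root: Node) -> list[Node]:
    # Depth-first walk over every design in the tree.
    stack, out = [root], []
    while stack:
        node = stack.pop()
        out.append(node)
        stack.extend(node.children)
    return out


def lineage(node: Optional[Node]) -> list[str]:
    # Follow parent links back to the root: the evolutionary path of one design.
    path = []
    while node is not None:
        path.append(node.label)
        node = node.parent
    return path[::-1]
```

For example, a root "raw episode log" design with a child "add summaries" and grandchild "add risk table" yields that three-step path from `lineage` when called on the grandchild (labels here are toy values).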

Evolved Memory Structures

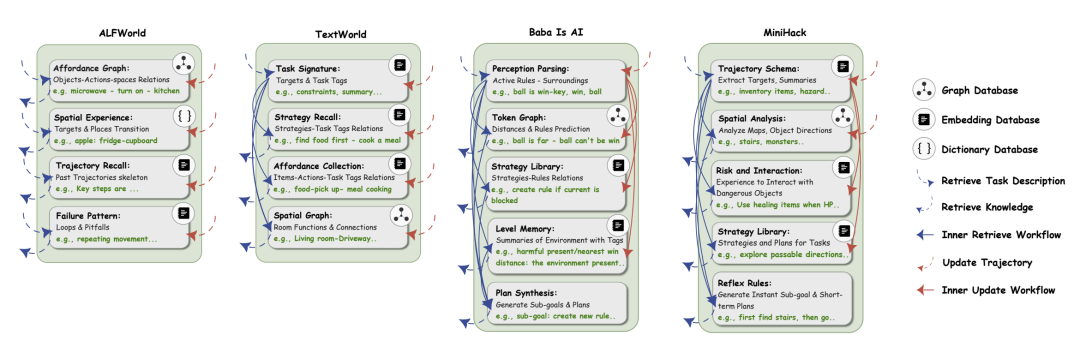

The memory designs ALMA produces differ markedly from task to task:

- MiniHack (dungeon exploration): ALMA designed a Risk and Interaction module that explicitly records which actions cost health and how aggressive each monster is.

- Baba Is AI (logic puzzles): ALMA designed a Strategy Library that records the combinations of rules needed to clear a level.

This suggests that the Meta Agent can pick up on task characteristics: survival games call for attention to risk, while puzzle games call for abstracting rules.
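
To give a feel for what such task-specific memories might look like as code, here is a toy version of each. Both classes, their method names, and the HP-delta threshold are hypothetical illustrations written for this article, not the code ALMA actually generated.

```python
from collections import defaultdict


class RiskMemory:
    """Toy MiniHack-style risk memory (hypothetical, not ALMA's generated code)."""

    def __init__(self):
        self.hp_deltas = defaultdict(list)  # action -> observed HP changes
        self.encounters = defaultdict(int)  # monster -> times encountered
        self.attacks = defaultdict(int)     # monster -> times it attacked

    def record_step(self, action, hp_before, hp_after):
        self.hp_deltas[action].append(hp_after - hp_before)

    def record_encounter(self, monster, attacked):
        self.encounters[monster] += 1
        if attacked:
            self.attacks[monster] += 1

    def aggressiveness(self, monster):
        # Fraction of encounters in which this monster attacked (0.0 if never seen).
        seen = self.encounters.get(monster, 0)
        return self.attacks.get(monster, 0) / seen if seen else 0.0

    def risky_actions(self, threshold=-1.0):
        # Actions whose average HP change is at or below `threshold`.
        return sorted(a for a, d in self.hp_deltas.items() if sum(d) / len(d) <= threshold)

    def retrieve(self):
        lines = [f"avoid: {a}" for a in self.risky_actions()]
        lines += [f"{m}: attacks {self.aggressiveness(m):.0%} of the time" for m in sorted(self.encounters)]
        return "\n".join(lines)


class StrategyLibrary:
    """Toy Baba Is AI-style library of rule combinations that cleared a level (hypothetical)."""

    def __init__(self):
        self.solutions = {}  # frozenset of visible rule words -> rules formed to win

    def record_win(self, visible_words, rules_formed):
        self.solutions[frozenset(visible_words)] = list(rules_formed)

    def suggest(self, visible_words):
        # Reuse the solution from the past level whose words overlap most with this one.
        words = set(visible_words)
        best = max(self.solutions.items(), key=lambda kv: len(words & kv[0]), default=None)
        return best[1] if best else []
```

The contrast is the point: one structure tracks numeric risk statistics per action and monster, the other stores reusable symbolic solutions.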

Experimental Results

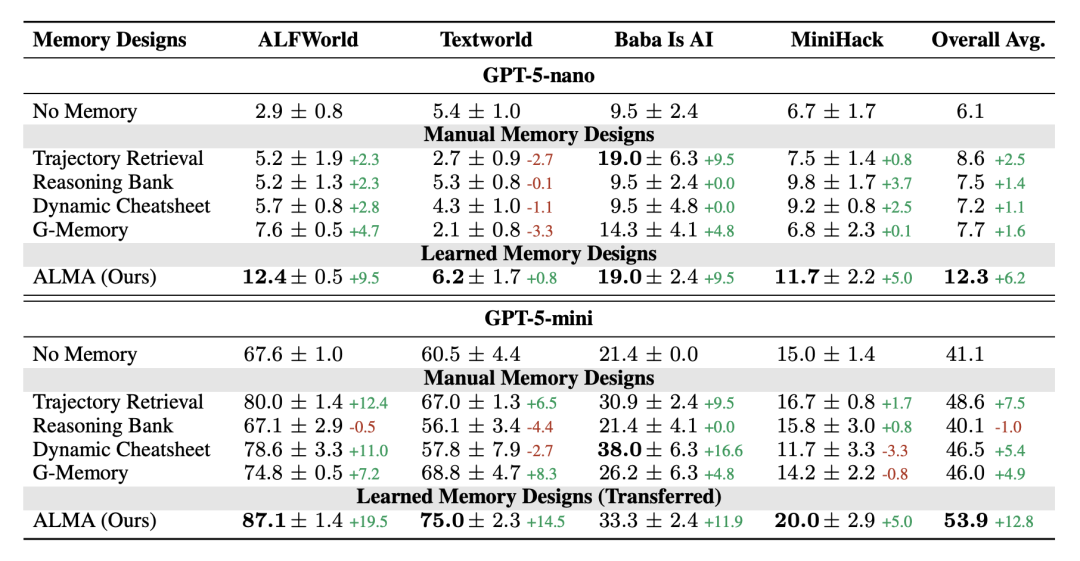

ALMA was compared with mainstream baselines in four environments: TextWorld, ALFWorld, MiniHack, and Baba Is AI.

With GPT-5-mini as the underlying model, ALMA reached an average success rate of 53.9%, ahead of Trajectory Retrieval (48.6%) and G-Memory (46.0%).

ALMA is also cheaper: it uses only 1,319 tokens on average, versus 9,149 for Trajectory Retrieval and 6,055 for G-Memory. In other words, it delivers better performance at roughly 1/7 to 1/5 of the token cost.
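
The headline comparison is simple arithmetic on the reported averages. The snippet below re-derives the success-rate gaps and token-cost ratios from those numbers; the `RESULTS` table and `compare` helper are illustrative names.

```python
# Average success rate (%) and average token count as reported for GPT-5-mini.
RESULTS = {
    "ALMA": (53.9, 1319),
    "Trajectory Retrieval": (48.6, 9149),
    "G-Memory": (46.0, 6055),
}


def compare(results, ref="ALMA"):
    """Return {baseline: (success-rate gap in points, ref tokens / baseline tokens)}."""
    ref_success, ref_tokens = results[ref]
    return {
        name: (ref_success - success, ref_tokens / tokens)
        for name, (success, tokens) in results.items()
        if name != ref
    }


for name, (gap, ratio) in compare(RESULTS).items():
    print(f"{name}: +{gap:.1f} points at {ratio:.2f}x the tokens (about 1/{1 / ratio:.1f})")
```

This works out to a 5.3-point gain at about 1/6.9 of Trajectory Retrieval's tokens, and a 7.9-point gain at about 1/4.6 of G-Memory's, which is where the "1/7 to 1/5" range comes from.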

Conclusion

ALMA demonstrates a possibility of transitioning from Software 2.0 (Neural Networks) to Software 3.0 (AI-Generating Algorithms).

In Agent development, memory design has long relied on engineers' intuition. ALMA shows that, through meta-learning and code generation, AI can automatically discover memory architectures tailored to a specific environment.

Resource Links

- Paper: https://arxiv.org/pdf/2602.07755

- Code: https://github.com/zksha/alma

- Project Homepage: https://yimingxiong.me/alma