OpenClaw + Claude Code/Codex: Building a Personal Development Agent Swarm

Hello everyone, I am Lu Gong.

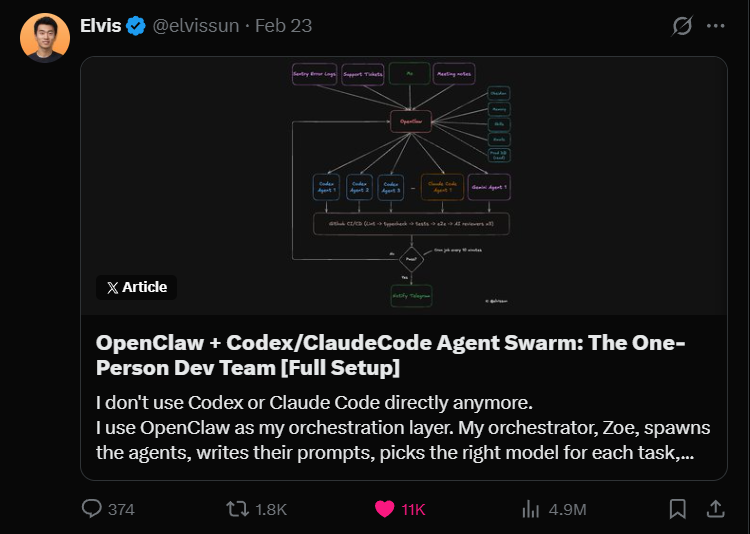

Recently, I came across a tweet on X that instantly caught my attention. An independent developer named Elvis mentioned that he no longer directly uses Claude Code and Codex; instead, he uses OpenClaw as an orchestration layer to manage a whole swarm of Claude Code and Codex agents with an AI orchestrator named Zoe.

The tweet's numbers are impressive: 4.9 million views, 11,000 likes, and 1,800 retweets.

Our account has been writing about Vibe Coding for over four months, and Claude Code has always been our main tool. I have also written some articles about multi-agent collaboration and VSCode multi-agent architecture.

However, seeing Elvis's approach, I can only call it expert-level. One person, relying on a single orchestration system, averages about 50 commits per day, with a peak of 94 commits in one day, while also taking three client calls without ever opening the editor.

Isn't this like one person acting as an entire development team?

Today's article will break down how he achieved this.

OpenClaw is Familiar to Everyone

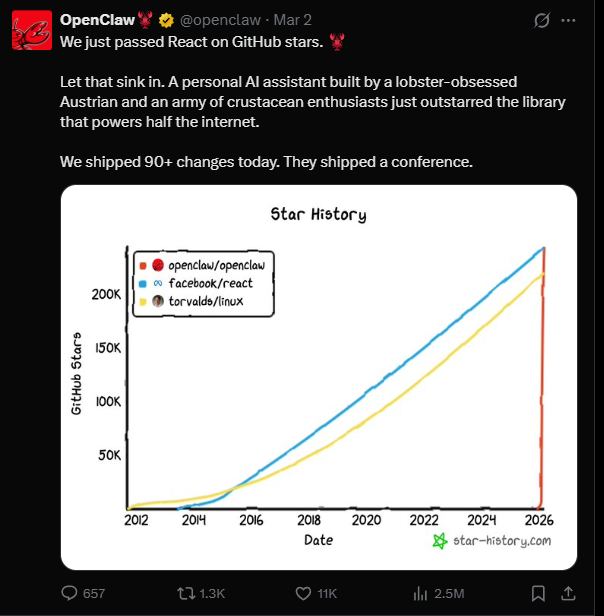

This little crayfish has been popular since before the Spring Festival. Simply put, it is an open-source AI agent framework, which has now surpassed 240,000 stars on GitHub and recently overtook React to become the fastest-growing open-source project in GitHub's history.

The founder, Peter Steinberger, is an Austrian developer who previously founded PSPDFKit (a B2B PDF framework company) and secured 100 million euros in investment from Insight Partners in 2021. In February of this year, Peter announced that he was joining OpenAI, and the OpenClaw project was handed over to an open-source foundation to run.

OpenClaw is not positioned as a chatbot; it is an AI agent runtime that runs on your local device. It has four core components: Gateway (connecting over 50 messaging platforms), Agent (inference engine), Skills (over 5,400 plugins), and Memory (memory system).

However, Elvis's use of OpenClaw is quite special. He directly treats it as an orchestration layer specifically for managing coding agents like Claude Code and Codex, rather than using it as a general assistant.

This approach is indeed quite unique.

Why Do We Need an Orchestration Layer?

Elvis mentioned a crucial point in his tweet: the context window is a zero-sum game.

If you fill it with code, there is no space for business context. If you fill it with client history and meeting notes, there is no space for the codebase. No matter how powerful a single AI is, it cannot simultaneously hold two completely different types of information.

So he split the system into two layers.

The upper layer is OpenClaw's orchestrator Zoe, who manages all business context, including client data, meeting notes, historical decisions, which solutions have been tried, and which have failed. All this information is stored in Elvis's Obsidian note library, which Zoe can directly access.

The lower layer consists of coding agents like Claude Code and Codex, which focus solely on the code. When Zoe starts an agent, she writes a precise prompt based on the business context, telling it what to do, what the background is, and what the client wants.

In simple terms: the orchestrator is responsible for understanding the requirements, while the coding agents are responsible for executing the tasks. Each does what it is best at.
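To make the split concrete, here is a minimal sketch of how an orchestrator might assemble such a prompt. The function name `build_prompt`, the section headings, and the two constraint lines are my own assumptions for illustration; Elvis did not publish Zoe's actual prompt template.

```shell
# Hypothetical helper: assemble an agent prompt from three pieces of business context.
# Usage: build_prompt "<task>" "<background>" "<what the client wants>"
# The headings and constraints below are illustrative, not taken from the tweet.
build_prompt() {
  cat <<EOF
## Task
$1

## Background
$2

## What the client wants
$3

## Constraints
- Work only inside this worktree.
- Open a pull request when done.
EOF
}
```

The point of the design is that the coding agent receives only this distilled text, while the raw meeting notes and client history stay in the orchestrator's context.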

This architecture is similar to Stripe's recently revealed internal system, Minions. Stripe's Minions also features a design of parallel coding agents with a centralized orchestration layer, capable of merging over 1,000 completely AI-written pull requests each week. Elvis mentioned that he inadvertently built a similar architecture, just running on his own Mac mini.

Real Case Workflow

Elvis used a real case in his tweet to explain his complete workflow, and I will briefly outline the core steps.

He received a call from a client who wanted to reuse existing configurations within the team. After the call, he discussed this requirement with Zoe. Since all meeting notes are automatically synced to Obsidian, Zoe already knew what the client had said and did not need Elvis to explain further. Together they defined the scope of the feature, and the final plan was to build a template system.

Then Zoe automatically did three things: topped up the client's account so that they were unblocked (she has admin API permissions), pulled the client's existing configurations from the production database (with read-only access, which the coding Agents will never have), and spawned a Codex Agent with a detailed prompt containing the complete business context.

Each Agent has its own independent worktree (isolated branch) and tmux session. The startup command is roughly like this:

```shell
# Create worktree + spawn agent
git worktree add ../feat-custom-templates -b feat/custom-templates origin/main
cd ../feat-custom-templates && pnpm install

# Start the agent in a detached tmux session rooted in the new worktree
tmux new-session -d -s "codex-templates" \
  -c "/Users/elvis/Documents/GitHub/medialyst-worktrees/feat-custom-templates" \
  "$HOME/.codex-agent/run-agent.sh templates gpt-5.3-codex high"
```

After the Agent starts running, a scheduled task checks on it every 10 minutes. It does not ask the Agent directly (that would consume too many tokens); instead, it runs a deterministic shell script that checks whether the tmux session is still alive, whether a PR has been created, and whether CI has passed.

If the CI fails, it automatically restarts the Agent, retrying up to 3 times. Notifications are only sent when human intervention is needed.
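The check-and-retry rules can be sketched as a small decision function. This is my own reconstruction, not Elvis's script: the names `session_alive` and `decide_action` are hypothetical, and treating "session dead with no PR" as a restartable failure is an assumption on my part. In a real setup, the PR and CI inputs might come from the GitHub CLI.

```shell
# Sketch of a deterministic health check, meant to run from cron every 10 minutes.
# All names are hypothetical; only the rules (tmux alive? PR created? CI passed?
# retry at most 3 times, notify only when a human is needed) come from the article.

MAX_RETRIES=3

# session_alive <tmux-session-name>: succeeds if the tmux session still exists.
session_alive() {
  tmux has-session -t "$1" 2>/dev/null
}

# decide_action <alive: yes|no> <pr: yes|no> <ci: pass|fail|pending> <retries so far>
# Prints one of: done, restart, notify, wait
decide_action() (
  alive=$1; pr=$2; ci=$3; retries=$4
  if [ "$pr" = "yes" ] && [ "$ci" = "pass" ]; then
    echo done
  # Assumption: a dead session that never opened a PR counts as a failure too.
  elif [ "$ci" = "fail" ] || { [ "$alive" = "no" ] && [ "$pr" = "no" ]; }; then
    if [ "$retries" -lt "$MAX_RETRIES" ]; then echo restart; else echo notify; fi
  else
    echo wait
  fi
)
```

Because the function only inspects cheap, deterministic signals, polling costs no tokens, and a human is notified only once the retry budget is used up.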

After the Agent completes its task, it automatically creates a PR. However, creating a PR is not enough. Elvis defined a set of completion criteria: the PR has been created, the branch is in sync with main (no merge conflicts), all CI checks have passed, the code reviews from all three AI models have passed, and screenshots are attached if there are UI changes.
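These criteria amount to a simple gate. Below is a minimal sketch under my own assumptions: the function name `is_done` and the way the inputs are passed are hypothetical, and how each flag is actually collected (CI API, review bots, and so on) is left out.

```shell
# Hypothetical "definition of done" gate for one PR.
# is_done <pr_created> <in_sync_with_main> <ci_passed> <reviews_passed> <ui_changed> <screenshots>
#   yes|no for every flag except <reviews_passed>, which is a count from 0 to 3.
# Returns 0 only when every criterion from the article is met.
is_done() {
  [ "$1" = "yes" ] || return 1          # PR exists
  [ "$2" = "yes" ] || return 1          # branch synced to main, no conflicts
  [ "$3" = "yes" ] || return 1          # all CI checks green
  [ "$4" -eq 3 ] || return 1            # all three AI reviews passed
  if [ "$5" = "yes" ]; then             # UI changes require screenshots
    [ "$6" = "yes" ] || return 1
  fi
  return 0
}
```

For example, `is_done yes yes yes 3 no no` succeeds, while the same call with only two passing reviews does not.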

Three AI Models for Code Review

Using three AI models for code review makes the process quite robust, and Elvis's assessment of the three is interesting.

He rated the Codex reviewer highest, saying it is very thorough on edge cases and logic errors, with a low false positive rate.

The Gemini Code Assist reviewer, which is free, he called very practical: it identifies security vulnerabilities and scalability issues that the other models overlook, and it proposes specific fixes.

As for the Claude Code reviewer, his exact words were "basically useless": it is overly cautious and full of suggestions like "consider adding...", most of which amount to over-engineering. Unless a finding is marked critical, he skips it.

I was a bit surprised when I read this. As a heavy user of Claude Code, I have indeed encountered situations where it was too conservative during code reviews, but saying it is basically useless seems a bit extreme. However, this also indirectly indicates that cross-reviewing with multiple models is indeed valuable, as the biases of different models complement each other.

Only after all three reviews pass does Elvis receive a Telegram notification. At this point, he mainly looks at the screenshots to confirm whether the UI changes are correct; he merges many PRs without looking at the code. He said his manual review only takes 5 to 10 minutes.

Zoe's Proactivity

Zoe is not just an executor. More interesting than the workflow itself is Zoe's proactivity.

Elvis said Zoe does not wait for tasks to be assigned; she actively seeks work. In the morning, she scans Sentry's error logs, finds 4 new errors, and automatically generates 4 Agents to fix them. After meetings, she scans the meeting notes, marks 3 feature requests mentioned by the client, and then automatically starts 3 Codex Agents. In the evening, she scans the Git logs and starts Claude Code to update the changelog and client documentation.
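The "one Agent per finding" fan-out can be sketched as a dry-run planner. This is only an illustration under my own assumptions: the function name `spawn_plan`, the `fix/<id>` branch naming, and the `./run-agent.sh` wrapper are hypothetical, and fetching the issue IDs from Sentry is not shown.

```shell
# Hypothetical dry-run planner: read one issue ID per line on stdin and print
# the commands that would create a worktree and a detached tmux session for each.
# Nothing is executed here; review the output before piping it to a shell.
spawn_plan() {
  while IFS= read -r id; do
    [ -n "$id" ] || continue   # skip empty lines
    printf 'git worktree add ../fix-%s -b fix/%s origin/main\n' "$id" "$id"
    printf 'tmux new-session -d -s "fix-%s" -c "../fix-%s" "./run-agent.sh %s"\n' "$id" "$id" "$id"
  done
}
```

Feeding it four issue IDs yields four worktree commands and four tmux commands, which mirrors the "4 new errors, 4 Agents" pattern described above.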

When Elvis comes back from a walk, there is a message waiting for him on Telegram: 7 PRs are ready, 3 new features and 4 bug fixes. Isn't this exactly the effect I have always hoped to create for a one-person company (OPC) development team?

Moreover, when an Agent fails, Zoe's handling goes well beyond a simple retry. She analyzes the cause of the failure in light of the business context. Did the Agent run out of context? She narrows the scope so that the Agent focuses on only three files. Did the Agent go off track? She corrects it, telling the Agent that the client wants X, not Y, and quoting the exact words from the meeting.

Over time, Zoe also accumulates experience, remembering which prompt structures work well for which types of tasks, so that she can write more precise prompts next time.

This idea is essentially an upgraded version of the Ralph Loop. The core logic of the Ralph Loop is a cycle of pulling context, generating output, evaluating results, and saving what was learned, but most implementations use a fixed prompt for every cycle. Elvis's system is different: each time Zoe retries, she dynamically adjusts the prompt based on the reason for the failure, backed by the complete business context.
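The difference from a fixed-prompt loop can be sketched in a few lines. This is a simplified illustration, not Zoe's implementation: the function name `adjust_prompt` and the failure labels `context_overflow` and `off_track` are hypothetical, and in the real system the diagnosis is made by the orchestrator model rather than passed in as a label.

```shell
# Hypothetical retry helper: rewrite the prompt according to why the last run failed.
# adjust_prompt <reason> <previous_prompt> <detail>
#   context_overflow: <detail> is the short list of files to focus on
#   off_track:        <detail> is the correction, e.g. a quote from the meeting notes
#   anything else:    the prompt is returned unchanged (a plain Ralph-style retry)
adjust_prompt() {
  case "$1" in
    context_overflow)
      printf '%s\n\nScope: only touch these files: %s\n' "$2" "$3" ;;
    off_track)
      printf '%s\n\nCorrection from the client: %s\n' "$2" "$3" ;;
    *)
      printf '%s\n' "$2" ;;
  esac
}
```

The fallback branch is what most Ralph Loop implementations do on every cycle; the first two branches are where the business context makes the retry smarter.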

Costs and Hardware

In terms of costs, Elvis publicly states that Claude costs about $100 per month, and Codex costs about $90 per month. He also mentioned that you can start testing the waters with as little as $20.

This cost is, of course, ridiculously cheap compared to hiring a developer. However, considering that you still need to make product decisions, communicate with clients, and conduct code reviews, it acts more like an efficiency amplifier, helping you save on the most repetitive tasks of coding and testing.

Regarding hardware, Elvis mentioned that his biggest bottleneck currently is RAM. Each Agent requires an independent worktree, each worktree has its own node_modules, and each Agent needs to run builds, type checks, and tests. Running 5 Agents simultaneously means 5 parallel TypeScript compilers, 5 test runners, and 5 sets of dependencies.

His Mac mini with 16GB of memory can run a maximum of 4 to 5 Agents at the same time; any more will start swapping memory. Therefore, he bought a Mac Studio M4 Max with 128GB of memory (costing $3500) to handle more concurrent Agents.
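A back-of-the-envelope estimate shows why RAM is the limit. The per-Agent footprint of 3 GB and the 2 GB reserved for the system are numbers I am assuming for illustration, not measurements from Elvis; the function name `max_agents` is likewise hypothetical.

```shell
# Rough capacity estimate: how many Agents fit in memory before swapping starts.
# max_agents <total_gb> <reserved_for_system_gb> <per_agent_gb>
# The per-agent and reserved figures are assumptions; measure your own workload.
max_agents() {
  echo $(( ($1 - $2) / $3 ))
}

# Example (assumed figures): max_agents 16 2 3 prints 4, max_agents 128 2 3 prints 42
```

With these assumed figures, a 16GB machine lands at 4 Agents, which is in line with the 4 to 5 Elvis reports.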

Summary and Real-World Issues

Honestly, Elvis's system has had quite an impact on me. I previously regarded OpenClaw as a toy and relied on standalone Claude Code for productivity, occasionally using worktrees for parallel tasks but nowhere near this level of systematic orchestration. After reading his tweets, I feel that the ceiling for AI programming has been raised again.

I am currently preparing to use OpenClaw to create a fully automated one-person development team based on his ideas. Therefore, we will be publishing multiple practical articles on OpenClaw soon.

There are a few real-world issues that I need to remind everyone about.

The premise of this system is that you have a clear product, defined customer needs, and a complete CI/CD pipeline. Elvis is working on a real B2B SaaS product with customers, revenue, and a production environment. If you are still in the demo or learning phase, this architecture is unlikely to pay for itself.

Additionally, the current security issues with OpenClaw must be noted. According to public information, several high-risk CVEs have been disclosed, and 341 malicious community plugins have been found to exhibit data theft behavior. When deploying OpenClaw, isolation and permission control must be done well. This is also the reason I have not deployed OpenClaw on my main local machine.

One more thing: Elvis rated Claude Code's code review relatively low in his tweets, but Claude Code has just launched the Agent Teams feature (officially built-in multi-Agent collaboration), so Anthropic is also pushing in the direction of orchestration.

However, aside from these details, Elvis's architecture idea of having an orchestration layer plus an execution layer is indeed worth paying attention to. The zero-sum game of context windows is a real constraint, and using a layered architecture to solve this problem, allowing different AIs to perform their respective roles, is a direction I personally believe is correct.

Friends interested in this topic can directly check out Elvis's original tweet, which is very information-dense:...
