PageIndex Deep Dive: Vectorless Reasoning-Based RAG, Enabling AI to Read Documents Like Human Experts

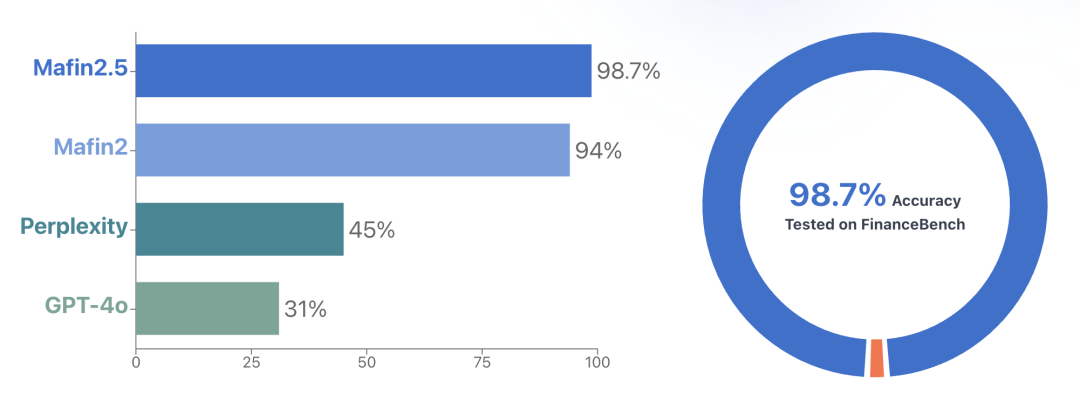

PageIndex is an open-source, vectorless, reasoning-based RAG framework (GitHub 14.8k+ stars) from the Vectify AI team. It transforms long documents into hierarchical tree indexes and uses LLMs for reasoning-based retrieval on the tree, achieving 98.7% accuracy on the FinanceBench financial document question answering benchmark.

1. Background: Five Pain Points of Traditional RAG

RAG has become the de facto standard for large language model (LLM) applications. The mainstream approach splits documents into fixed-length chunks during preprocessing, converts them into vectors with an embedding model, and stores them in a vector database. At query time, the user's question is embedded the same way, the Top-K most similar chunks are recalled through vector similarity search, and those chunks are concatenated into the input context for the LLM.
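The baseline pipeline described above can be sketched in a few lines. This is a minimal illustration, not any particular product's implementation: the `embed` function is a toy bag-of-words stand-in for a real embedding model, words stand in for tokens, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def chunk_words(text: str, size: int) -> list[str]:
    """Split text into fixed-length chunks of `size` words (words stand in for tokens)."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]


def embed(text: str) -> Counter:
    """Toy embedding: bag-of-words counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, chunks: list[str], k: int) -> list[str]:
    """Rank chunks by similarity to the query and keep the best k."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


document = (
    "Revenue for fiscal 2023 was 10 million dollars. "
    "Revenue for fiscal 2022 was 8 million dollars. "
    "The board approved a new dividend policy."
)
chunks = chunk_words(document, size=8)
# The recalled chunks are concatenated into the LLM's input context.
context = "\n".join(top_k("What was revenue in fiscal 2023?", chunks, k=2))
```

In this toy example the 2022 chunk is recalled alongside the 2023 chunk because the two differ by only a couple of words, which hints at the "similar but not relevant" problem discussed next.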

This process works well for short texts and general scenarios, but it exposes five fundamental problems with long, specialized documents (financial reports, laws and regulations, technical manuals, etc.):

1) Similarity ≠ Relevance. Vector retrieval assumes that "the most semantically similar text block = the most relevant source of answers," but in professional documents many paragraphs are semantically close while differing greatly in key details.

2) Hard Chunking Destroys Contextual Integrity. Dividing documents by fixed windows of 512 or 1024 tokens truncates sentences, paragraphs, and even entire logical segments, leading to the loss of crucial context.

3) Misalignment Between Query Intent and Knowledge Space. User queries express "intent" rather than "content," so a query's embedding can land far from the passage that actually contains the answer, even when both are produced by the same embedding model.

4) Inability to Handle In-Document References. Professional documents commonly contain references such as "see Appendix G" or "refer to Table 5.3." There is no semantic similarity between these references and the referenced content, making it impossible for vector retrieval to match them.

5) Independent Queries, Inability to Utilize Dialogue History. Each retrieval treats the query as an independent request, so the context of earlier dialogue turns cannot be used to refine retrieval progressively.

2. PageIndex Overall Architecture

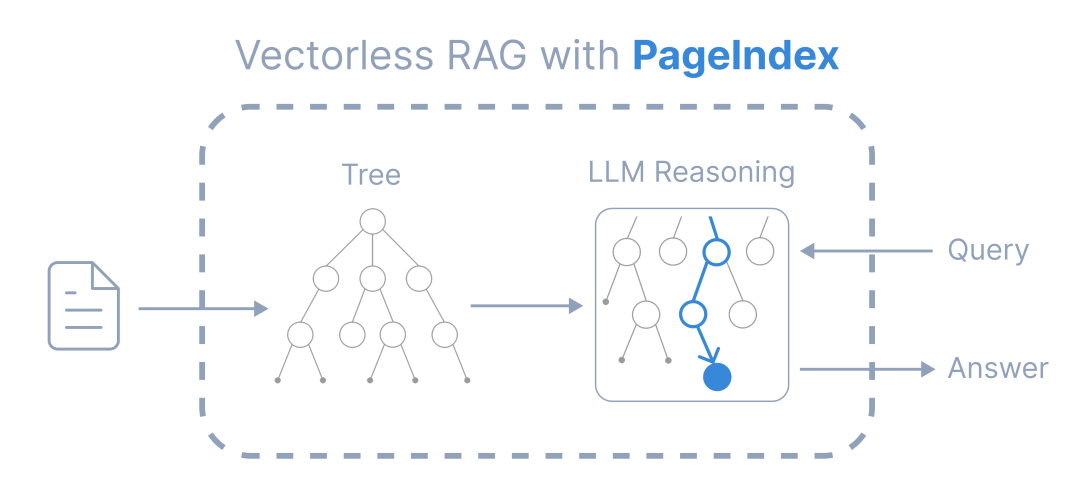

PageIndex is a vectorless, reasoning-based RAG framework. Its core idea is that, instead of having the model perform approximate matching in vector space, the model should reason over a structured representation of the document, deciding "where to look" rather than just "what looks similar."

PageIndex simulates how human experts read long documents: first, browse the table of contents, determine relevant chapters based on the question, and delve deeper until the target content is found. This process is achieved in two steps:

- Building a Tree Structure Index: Convert PDF/Markdown documents into a hierarchical JSON tree, similar to a "table of contents optimized for LLMs"

- Reasoning-Based Tree Search: The LLM navigates the tree based on the question, locates relevant nodes, extracts content, and generates answers

3. Core Module Breakdown

3.1 PDF Processing Pipeline

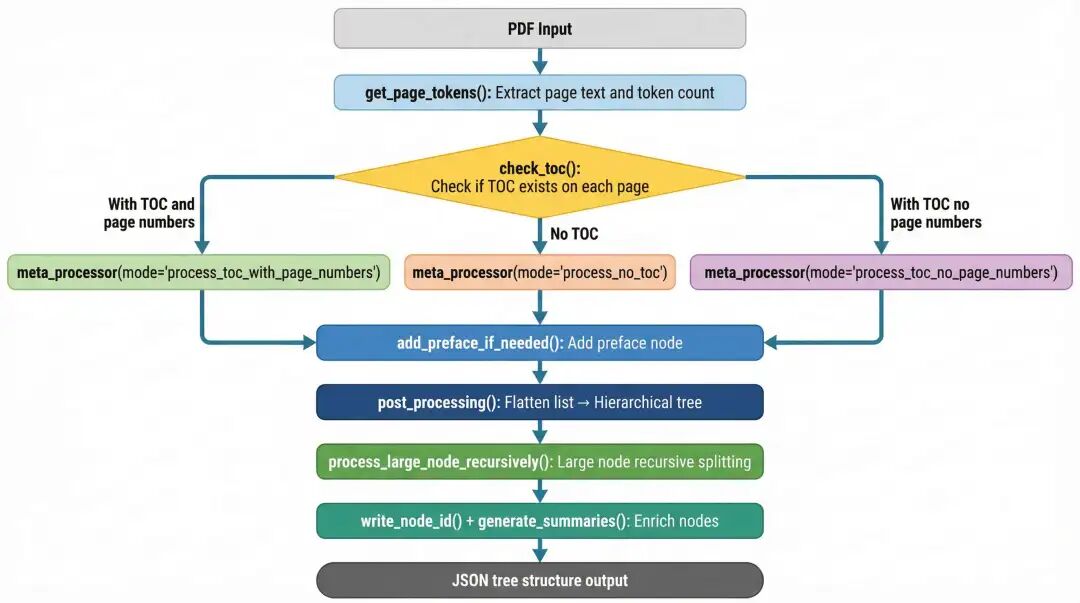

PageIndex's PDF processing pipeline is orchestrated by the tree_parser() function, and the core process includes: table of contents detection (three mode branches), supplementing the preface, converting flat lists to hierarchical trees, recursively subdividing large nodes, enriching nodes, and outputting the JSON tree structure.
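The "flat list to hierarchical tree" step can be illustrated with a small sketch. It assumes each flat entry carries a dotted structure code such as "1" or "1.2" and that parents appear before their children. The function name `flat_toc_to_tree` and the `structure` field are illustrative assumptions here, not a statement of how PageIndex implements the step.

```python
def flat_toc_to_tree(flat: list[dict]) -> list[dict]:
    """Nest flat table-of-contents entries using dotted structure codes.

    Assumes parents are listed before their children; entries whose parent
    code is unknown are kept at the top level instead of being dropped.
    """
    roots: list[dict] = []
    by_code: dict[str, dict] = {}
    for entry in flat:
        node = {
            "title": entry["title"],
            "start_index": entry["start_index"],
            "nodes": [],
        }
        code = entry["structure"]
        by_code[code] = node
        parent_code = code.rpartition(".")[0]  # "1.2" -> "1", "1" -> ""
        parent = by_code.get(parent_code)
        if parent is not None:
            parent["nodes"].append(node)
        else:
            roots.append(node)
    return roots


flat = [
    {"structure": "1", "title": "Overview", "start_index": 1},
    {"structure": "1.1", "title": "Scope", "start_index": 2},
    {"structure": "2", "title": "Risk Factors", "start_index": 5},
]
tree = flat_toc_to_tree(flat)
```

Here `tree` holds two top-level nodes, with "Scope" nested under "Overview".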

Three Processing Modes:

- process_toc_with_page_numbers (with table of contents + with page numbers): Use LLM to convert the original table of contents into structured JSON, mapping logical page numbers to physical page numbers

- process_no_toc (no table of contents): The LLM directly infers the hierarchical structure from the main text content

- process_toc_no_page_numbers (with table of contents but no page numbers): Extract the structure and then infer and supplement the physical page numbers
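As a rough sketch of how the three branches relate, the helper below picks a mode name from two detection flags, and a second helper shows one plausible way to map logical page numbers to physical ones using a constant offset. Both helpers (`choose_mode`, `page_offset`) and the offset heuristic are assumptions made for illustration; only the three mode names come from the text above.

```python
from collections import Counter


def choose_mode(has_toc: bool, toc_has_page_numbers: bool) -> str:
    """Return the name of the processing mode for a document (hypothetical helper)."""
    if not has_toc:
        return "process_no_toc"
    if toc_has_page_numbers:
        return "process_toc_with_page_numbers"
    return "process_toc_no_page_numbers"


def page_offset(matched: list[tuple[int, int]]) -> int:
    """Estimate physical - logical page offset from (logical, physical) pairs.

    Takes the most common difference so a few mismatched pairs do not skew it.
    """
    if not matched:
        raise ValueError("need at least one matched (logical, physical) pair")
    diffs = Counter(physical - logical for logical, physical in matched)
    return diffs.most_common(1)[0][0]


# Example: the printed page 1 sits on physical page 5 of the PDF.
offset = page_offset([(1, 5), (10, 14), (20, 25)])
physical_page = 42 + offset  # logical page 42 from the table of contents
```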

3.2 Tree Structure Data Model

Each node in the tree contains fields such as: title, node_id, start_index, end_index, summary, prefix_summary, text, nodes (array of child nodes), etc.
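One way to picture this data model is as a recursive dataclass plus a flat lookup table keyed by node_id. The field names below follow the list above; the types, the default values, the example node_id values, and the `build_node_map` helper are assumptions for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class TreeNode:
    title: str
    node_id: str
    start_index: int  # assumed: first page covered by the node
    end_index: int    # assumed: last page covered by the node
    summary: str = ""
    prefix_summary: str = ""
    text: str = ""
    nodes: list["TreeNode"] = field(default_factory=list)  # child nodes


def build_node_map(roots: list[TreeNode]) -> dict[str, TreeNode]:
    """Flatten the tree into {node_id: node} so any node can be fetched by id."""
    node_map: dict[str, TreeNode] = {}
    stack = list(roots)
    while stack:
        node = stack.pop()
        node_map[node.node_id] = node
        stack.extend(node.nodes)
    return node_map


child = TreeNode(title="Scope", node_id="0001", start_index=2, end_index=4)
root = TreeNode(title="Overview", node_id="0000", start_index=1, end_index=4, nodes=[child])
node_map = build_node_map([root])
```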

3.3 Reasoning-Based Retrieval Mechanism

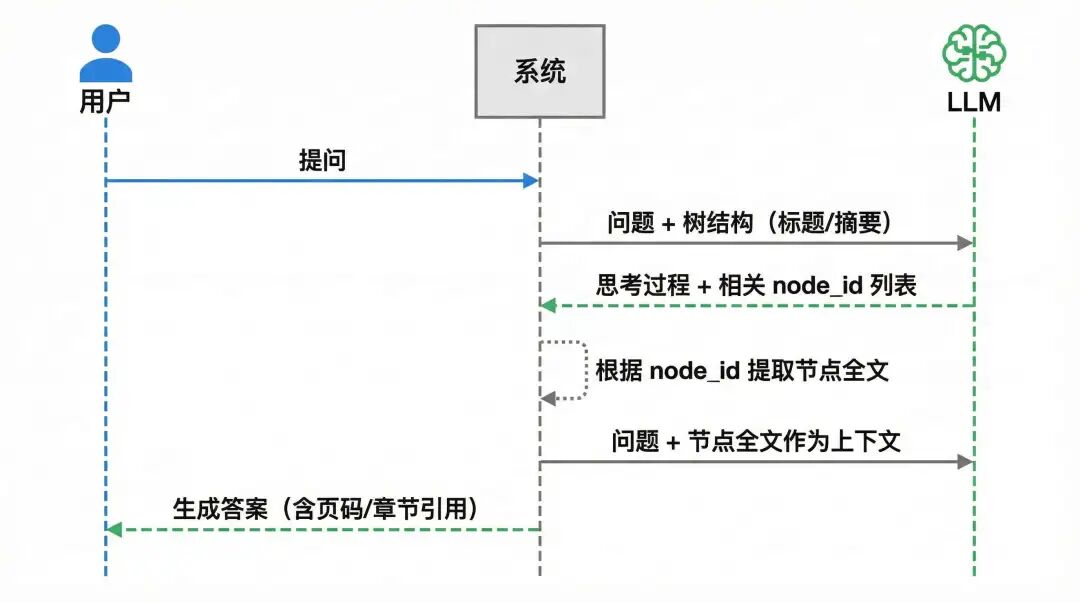

The retrieval stage does not rely on any vector calculations. The LLM receives the user's question and the document tree structure, reasons over the node titles and summaries, and outputs its "thought process" together with a list of relevant node_ids. The system then looks up the full text of those nodes in the node_map and concatenates it into the context from which the LLM generates the final answer.
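The retrieval loop can be sketched as follows. This is a simplified illustration under stated assumptions: the prompt wording, the JSON reply keys (`thinking`, `node_list`), and the helper names are hypothetical, and `llm` is any callable that maps a prompt string to a reply string. A stub is used here so the example runs without a model.

```python
import json
from typing import Callable


def outline(nodes: list[dict]) -> list[dict]:
    """Drop full text so the LLM sees only ids, titles, and summaries."""
    return [
        {
            "node_id": n["node_id"],
            "title": n["title"],
            "summary": n.get("summary", ""),
            "nodes": outline(n.get("nodes", [])),
        }
        for n in nodes
    ]


def flatten(nodes: list[dict]) -> dict[str, dict]:
    """Build a node_map of {node_id: node} over the whole tree."""
    node_map: dict[str, dict] = {}
    for n in nodes:
        node_map[n["node_id"]] = n
        node_map.update(flatten(n.get("nodes", [])))
    return node_map


def retrieve(question: str, tree: list[dict], llm: Callable[[str], str]) -> tuple[str, str]:
    """Ask the LLM which nodes are relevant, then gather their full text."""
    prompt = (
        "Given the question and the document tree, reply with JSON of the form "
        '{"thinking": "...", "node_list": ["..."]}.\n'
        f"Question: {question}\nTree: {json.dumps(outline(tree))}"
    )
    reply = json.loads(llm(prompt))
    node_map = flatten(tree)
    # Skip any ids the model returned that do not exist in the tree.
    texts = [node_map[i]["text"] for i in reply["node_list"] if i in node_map]
    return reply["thinking"], "\n\n".join(texts)


def fake_llm(prompt: str) -> str:
    """Stub standing in for a real model call."""
    return json.dumps({
        "thinking": "Revenue figures belong to the income statement.",
        "node_list": ["0002", "9999"],
    })


tree = [{
    "node_id": "0001", "title": "Annual Report", "summary": "Full report",
    "text": "Cover page.",
    "nodes": [{
        "node_id": "0002", "title": "Income Statement",
        "summary": "Revenue and expenses",
        "text": "Revenue was 10 million dollars.", "nodes": [],
    }],
}]
thinking, context = retrieve("What was revenue?", tree, fake_llm)
```

The returned `context` would then be placed in a second prompt for answer generation, and `thinking` can be logged as the reasoning trace for that retrieval.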

4. Core Design Highlights

- Vectorless Architecture: No need for embedding models and vector databases, reducing infrastructure costs and simplifying deployment

- Preserves Natural Document Structure: Organizes content according to the document's inherent chapters/sections/subsections, avoiding cross-chunk context loss

- Explainability of Retrieval: Each retrieval returns a complete reasoning chain, which has a clear advantage in scenarios with high compliance requirements

5. Evaluation Results

Mafin 2.5 is a financial document question answering system based on PageIndex. Its performance on FinanceBench (financial document QA benchmark) reaches 98.7% accuracy, far exceeding Perplexity (45%) and GPT-4o (31%).

6. Applicable Scenarios

Suitable for: Long documents with clear hierarchical structures (financial reports, regulations, textbooks, manuals), ranging from dozens to hundreds of pages

Not suitable for: Documents with unstructured content, un-OCR'd scans, documents primarily consisting of tables/charts, scenarios requiring millisecond-level real-time response

7. Summary

PageIndex's core contribution lies in proposing a practical vectorless RAG paradigm: using the natural structure of documents to build a tree index and using LLM reasoning to replace vector similarity search. This solution performs excellently in professional long document scenarios with clear hierarchical structures, and its explainability and auditability are also significantly better than traditional solutions.