Qwen 3.5 Released: 397B Parameter Open-Weight Model, 60% Cost Reduction

Alibaba has just released Qwen 3.5-397B-A17B. This is the first open-weight model in the Qwen 3.5 series.

Core Data

- Total Parameters: 397B

- Active Parameters: 17B per forward pass (sparse MoE)

- Throughput: 8.6x-19x improvement over Qwen 3-Max

- Cost: 60% reduction compared to Qwen 3

- Language Support: 201 languages (expanded from 119)

This is not simply a matter of stacking parameters; it is a redefinition of efficiency.

Architectural Innovation

Qwen 3.5 uses a hybrid architecture:

- Gated Delta Networks + Sparse MoE

- Hybrid Linear Attention: Most layers use linear attention, with full attention in every fourth layer

- Native Multimodal: Multimodality is trained in from scratch rather than added later
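The "full attention every 4 layers" pattern can be sketched as a simple layer schedule. This is only an illustration: the function name `layer_schedule` is hypothetical, and placing the full-attention layer last in each group of four is an assumption, since the article does not say where in the group it sits.

```python
def layer_schedule(num_layers: int, full_every: int = 4) -> list[str]:
    """Return the attention type used by each layer, in order.

    Assumption (not stated in the article): the last layer of every
    group of `full_every` layers uses full attention, the rest linear.
    """
    if num_layers < 0 or full_every < 1:
        raise ValueError("num_layers must be >= 0 and full_every >= 1")
    return [
        "full" if (i + 1) % full_every == 0 else "linear"
        for i in range(num_layers)
    ]


schedule = layer_schedule(8)
print(schedule)

# Share of layers that pay the cost of full attention.
full_share = schedule.count("full") / len(schedule)
print(f"{full_share:.0%} of layers use full attention")  # 25%
```

Under this assumption, three out of every four layers use linear attention, which is where the throughput gain on long inputs would come from.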

The @Alibaba_Qwen account gave a one-line technical summary on X:

"Qwen3.5-397B-A17B: Hybrid linear attention + sparse MoE with large-scale RL environment scaling." — @Alibaba_Qwen

The significance of this architecture is that it aims for performance close to that of a 400B-class model while activating only 17B parameters per forward pass, which significantly reduces inference cost.
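A quick back-of-the-envelope check shows how small the active share is. The two figures come from the article; treating per-token compute as roughly proportional to active parameters is a simplifying assumption that ignores attention and routing overhead.

```python
TOTAL_PARAMS_B = 397   # total parameters, in billions (from the article)
ACTIVE_PARAMS_B = 17   # parameters active per forward pass, in billions

# Fraction of the model's weights that take part in a single forward pass.
active_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B
total_to_active = TOTAL_PARAMS_B / ACTIVE_PARAMS_B

print(f"Active share: {active_fraction:.1%}")             # 4.3%
print(f"Total-to-active ratio: {total_to_active:.1f}x")   # 23.4x
```

In other words, only about one weight in 23 is used for any given token, even though all 397B must still be stored.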

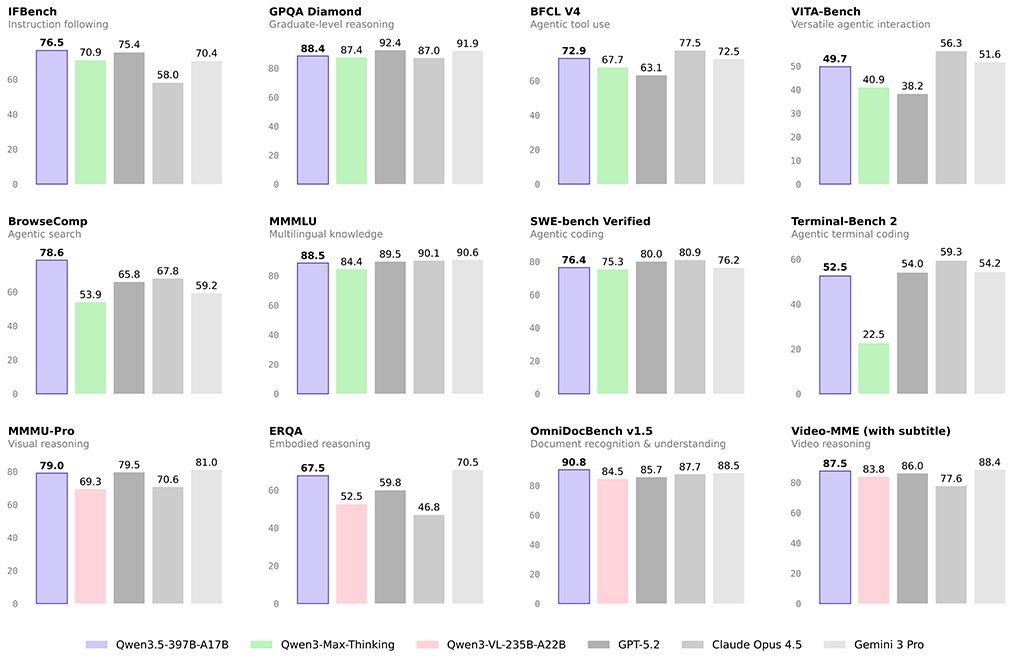

Performance Claims

Alibaba claims that Qwen 3.5 beats:

- GPT-5.2

- Claude Opus 4.5

- Gemini 3 Pro

Early testers on X are starting to weigh in:

"Qwen 3.5-397B dropped today... and the benchmarks are insane. Trading blows with Claude Opus 4.5 and GPT-5.2 across the board." — @antonpme

The most important story, however, is not the benchmarks but the agent capabilities:

"The agentic capabilities are the real story here. Qwen 3.5 can interact with GUIs, not just understand them. That's the unlock for workflows that touch existing software." — @thebuildrweekly

Agent Era

Qwen 3.5's positioning is very clear: it is designed for the agent era.

- Can analyze 2-hour videos

- Can independently execute cross-application tasks

- Can understand and interact with GUIs

"Qwen 3.5 can independently take actions across apps." — @thebuildrweekly

This means it is not a "chatbot", but a "task executor".

Competitive Landscape

Someone on X summarized this week's AI releases:

"This might be the single biggest week in AI history: DeepSeek V4, Gemini 3.1 Pro, GPT-5.3, Qwen 3.5, Claude Sonnet 5." — @HeyAbhishek

The release cadence of Chinese model developers is very clear:

- DeepSeek V4

- Qwen 3.5

- GLM 5

- MiniMax 2.5

A new model arrives every week, each claiming to beat GPT. This is not marketing; it is an escalation of the cost war.

Cost Structure

The token price of Qwen 3.5 is only 1/18 that of Gemini 3 Pro.

"Qwen 3.5 with performance comparable to Gemini 3, and a token price of only 1/18 of the latter." — @dyz_ob

When performance is close and the cost is only about 5.6% (1/18), where is the moat of closed-source models?
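The 1/18 figure translates into a percentage and a bill as follows. This is a minimal sketch: the ratio is the quoted claim, while the helper `relative_cost` and the 1,000-unit baseline spend are hypothetical values chosen only for illustration.

```python
from fractions import Fraction

# Qwen 3.5 token price relative to Gemini 3 Pro, as quoted in the article.
PRICE_RATIO = Fraction(1, 18)


def relative_cost(baseline_spend: float, ratio: Fraction = PRICE_RATIO) -> float:
    """Spend on the cheaper model for the same token volume."""
    return baseline_spend * float(ratio)


print(f"Price as a share of the baseline: {float(PRICE_RATIO):.1%}")  # 5.6%

# Hypothetical example: a workload that costs 1,000 units on the baseline.
print(f"Equivalent spend: {relative_cost(1000.0):.2f}")  # 55.56
```

The comparison assumes identical token volume on both models; differences in tokenization or output length would shift the real ratio.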

Bottom Line

Qwen 3.5 is not "China's GPT". It is a disruptor of the cost structure:

- 397B parameters, but only 17B are activated

- Open weights, can be deployed locally

- Agent capabilities, not just dialogue

- Token price is roughly 1/18 (about 5.6%) of Gemini 3 Pro's

There is an interesting observation on X:

"Qwen 3.5 Q4 version only needs 225G, which is very practical" — @janxin

At roughly 225GB of video memory, the Q4 (4-bit quantized) version can run on a single machine. This means that small and medium-sized developers can access a model close to GPT-5 level for the first time.
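The 225GB figure is roughly what a weight-only estimate would predict. The sketch below is an approximation under stated assumptions: the helper `weight_memory_gb` is hypothetical, the 4.5 bits per weight is an assumed effective rate (4-bit formats usually store extra scale metadata), and KV cache and activation memory are not counted.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# 397B parameters at several storage widths.
# 4.5 bits is an assumed effective rate for a 4-bit format, not a source figure.
for bits in (4.0, 4.5, 16.0):
    print(f"{bits:>4} bits/weight -> {weight_memory_gb(397, bits):.1f} GB")
```

At a flat 4 bits per weight the estimate is 198.5 GB, and at the assumed 4.5 bits it is about 223.3 GB, which is in line with the quoted 225G. At 16 bits the same weights would need about 794 GB.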

The real question is not whether Qwen 3.5 can beat GPT-5.3, but: how do AI companies make money when the cost of top models drops to near zero?