Recently, I came across two good papers on LLM+KG for complex logical reasoning:

- LARK: Complex Logical Reasoning over Knowledge Graphs using Large Language Models (https://arxiv.org/abs/2305.01157)

- ROG: A Large Language Model Based Method for Complex Logical Reasoning over Knowledge Graphs (https://arxiv.org/abs/2512.19092)

I. The Dilemma of Knowledge Graph Reasoning

Knowledge graphs (KGs), the core carriers of structured knowledge, face three major challenges:

- Complexity: queries combine multi-hop reasoning, intersection, union, and negation, so the number of possible operation combinations explodes

- Incompleteness: real-world KGs typically contain noise and missing facts

- Generalization: traditional embedding methods transfer poorly across datasets

Traditional solutions (such as Query2Box and BetaE) rely on geometric or probabilistic embedding spaces, modeling logical operations as operations on vectors, boxes, or distributions, but they suffer from severe information loss as reasoning gets deeper. How can a model both understand the logical structure of a query and reason flexibly? The rise of large language models (LLMs) offers a new approach.
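
To make the geometric idea concrete, here is a minimal sketch in the spirit of Query2Box: a query is an axis-aligned box given by (center, offset), relation projection translates the center and grows the offset, and an entity is scored by a distance that is small inside the box and large outside it. The 2-D vectors are hand-set toy values rather than trained embeddings, the function names are hypothetical, and the hard geometric intersection is a simplification (Query2Box itself learns an attention-based intersection).

```python
# Boxes are (center, offset) pairs of equal-length lists; a box covers
# [center - offset, center + offset] in every dimension.

def project(box, relation):
    """Relation projection: translate the center and grow the offset."""
    (c, o), (rc, ro) = box, relation
    return [x + y for x, y in zip(c, rc)], [x + y for x, y in zip(o, ro)]


def intersect(boxes):
    """Hard geometric intersection of boxes (a simplification; Query2Box
    learns an attention-based intersection instead)."""
    dims = range(len(boxes[0][0]))
    lows = [max(c[i] - o[i] for c, o in boxes) for i in dims]
    highs = [min(c[i] + o[i] for c, o in boxes) for i in dims]
    highs = [max(h, l) for h, l in zip(highs, lows)]  # empty -> zero-width box
    center = [(l + h) / 2 for l, h in zip(lows, highs)]
    offset = [(h - l) / 2 for l, h in zip(lows, highs)]
    return center, offset


def box_distance(entity, box, alpha=0.2):
    """Query2Box-style score: L1 distance outside the box, plus alpha times the
    L1 distance from the center to the entity's nearest point in the box."""
    center, offset = box
    dist = 0.0
    for v, ci, oi in zip(entity, center, offset):
        lo, hi = ci - oi, ci + oi
        outside = max(v - hi, 0.0) + max(lo - v, 0.0)
        inside = abs(ci - min(hi, max(lo, v)))
        dist += outside + alpha * inside
    return dist


anchor = ([0.0, 0.0], [0.0, 0.0])                  # an anchor entity is a zero-size box
query = project(anchor, ([1.0, 0.0], [0.5, 0.5]))  # one hop: center [1, 0], offset [0.5, 0.5]
print(box_distance([1.2, 0.1], query))             # inside the box: small score
print(box_distance([3.0, 0.0], query))             # outside the box: large score
```

Each extra hop or intersection reshapes the box, and whatever the box cannot represent is lost, which is one intuition for the information loss in deep queries mentioned above.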

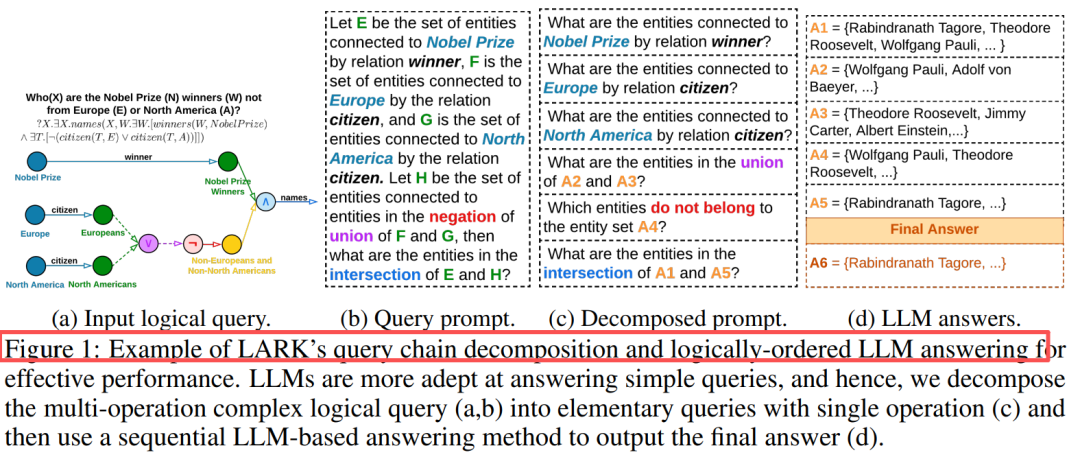

Figure 1: LARK's query chain decomposition and LLM reasoning process. Complex multi-operation queries are decomposed into single-operation subqueries, which are solved step by step.

II. Solution: Inheritance and Evolution of Two Generations of Methods

LARK (2023): Pioneering Work

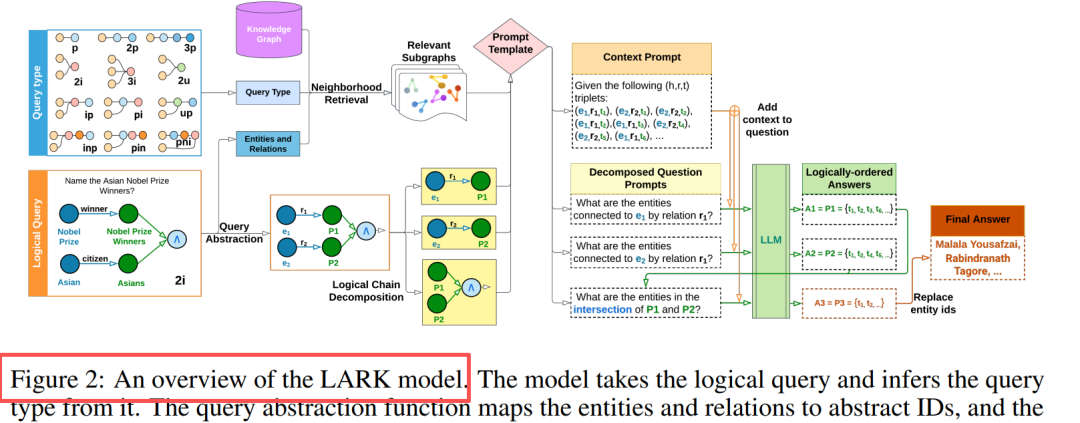

Figure 2: Decomposition strategy for the 14 query types. For example, 3p is split into 3 projections, and 3i into 3 projections plus 1 intersection.
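
The decomposition in Figure 2 can be sketched as a post-order walk over a query tree that emits one single-operation step per node, naming each intermediate answer with a placeholder such as `[A1]`; the steps are then answered in order, substituting cached answers for placeholders. The tuple encoding of queries, the helpers `decompose` and `run_chain`, and the toy triples are all hypothetical, and the set operations in `run_chain` stand in for the per-step LLM call that LARK actually makes. Negation and the remaining query types are omitted for brevity.

```python
# Hypothetical query encoding (not LARK's actual format):
#   ("e", entity)              anchor entity
#   ("p", relation, subquery)  projection
#   ("i", [subqueries])        intersection
#   ("u", [subqueries])        union

def decompose(query):
    """Flatten a nested query into single-operation steps (post-order)."""
    steps = []

    def visit(q):
        kind = q[0]
        if kind == "e":
            return q[1]
        if kind == "p":
            args = (q[1], visit(q[2]))
        elif kind in ("i", "u"):
            args = tuple(visit(sub) for sub in q[1])
        else:
            raise ValueError(f"unsupported query kind: {kind}")
        ref = f"[A{len(steps) + 1}]"      # placeholder for this step's answer
        steps.append((ref, kind, args))
        return ref

    final_ref = visit(query)
    return steps, final_ref


def run_chain(steps, final_ref, triples):
    """Answer steps in order, caching each result under its placeholder.
    The set operations stand in for the per-step LLM call."""
    cache = {}

    def resolve(arg):
        # A placeholder becomes its cached answer set; an entity stands alone.
        return cache[arg] if arg in cache else {arg}

    for ref, kind, args in steps:
        if kind == "p":
            rel, src = args
            heads = resolve(src)
            cache[ref] = {t for h, r, t in triples if h in heads and r == rel}
        elif kind == "i":
            cache[ref] = set.intersection(*(resolve(a) for a in args))
        else:  # "u"
            cache[ref] = set.union(*(resolve(a) for a in args))
    return cache[final_ref]


TRIPLES = [
    ("e1", "r1", "a"), ("a", "r2", "b"), ("b", "r3", "c"),
    ("e2", "r1", "a"), ("e3", "r1", "a"), ("e3", "r1", "d"),
]
Q_3P = ("p", "r3", ("p", "r2", ("p", "r1", ("e", "e1"))))
Q_3I = ("i", [("p", "r1", ("e", "e1")), ("p", "r1", ("e", "e2")), ("p", "r1", ("e", "e3"))])

steps, final = decompose(Q_3I)
for step in steps:
    print(step)              # 3 projections, then 1 intersection over [A1]..[A3]
print(run_chain(steps, final, TRIPLES))  # {'a'}
```

Because each step contains exactly one operation, the model only ever has to answer a simple query, which is the property LARK relies on.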

Core Innovation: Query Abstraction + Logical Chain Decomposition

| Component | Design |
|---|---|
| Query Abstraction | Replace entity and relation names with IDs to reduce hallucinations and improve generalization |
| Neighborhood Retrieval | k-hop depth-first traversal (k=3) to extract the relevant subgraph |
| Chain Decomposition | Multi-operation query → sequence of single-operation subqueries |
| Sequential Reasoning | Cache intermediate results and fill in placeholders in logical order |

Key Insight: LLMs are good at answering simple queries, and decomposing complex queries into simple ones improves performance by 20%-33%.
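
The first two components can be sketched on a toy graph: abstraction maps every entity and relation name to an opaque ID, and retrieval runs a depth-limited DFS from the anchor entity to collect the k-hop subgraph. The helper names and toy facts are hypothetical; following edges in both directions and re-expanding a node when it is reached by a shorter path (so a long first path does not hide nearby triples) are assumptions of this sketch, not details confirmed from the paper. The default `k=3` mirrors the table.

```python
from collections import defaultdict


def abstract(triples):
    """Query abstraction: replace entity and relation names with opaque IDs."""
    ent_ids, rel_ids = {}, {}

    def eid(name):
        return ent_ids.setdefault(name, f"e{len(ent_ids)}")

    def rid(name):
        return rel_ids.setdefault(name, f"r{len(rel_ids)}")

    abstracted = [(eid(h), rid(r), eid(t)) for h, r, t in triples]
    return abstracted, ent_ids, rel_ids


def k_hop_neighborhood(triples, start, k=3):
    """Collect every triple touching a node fewer than k hops from `start`,
    using a depth-limited DFS that re-expands a node reached by a shorter path."""
    adj = defaultdict(list)
    for tri in triples:
        h, _, t = tri
        adj[h].append(tri)
        adj[t].append(tri)  # follow edges in both directions (assumption)
    best_depth, collected = {}, set()

    def dfs(node, depth):
        if best_depth.get(node, k + 1) <= depth:
            return  # already expanded at this depth or shallower
        best_depth[node] = depth
        if depth == k:
            return
        for tri in adj[node]:
            collected.add(tri)
            h, _, t = tri
            dfs(t if h == node else h, depth + 1)

    dfs(start, 0)
    return collected


KG = [
    ("Alan Turing", "born_in", "London"),
    ("London", "capital_of", "United Kingdom"),
    ("United Kingdom", "member_of", "G7"),
    ("G7", "includes", "Japan"),
]
abstracted, ent_ids, rel_ids = abstract(KG)
print(abstracted[0])  # ('e0', 'r0', 'e1'): no names reach the LLM
print(sorted(k_hop_neighborhood(abstracted, ent_ids["Alan Turing"], k=2)))
```

The ID mappings are kept outside the prompt so final answers can be translated back to names afterwards.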

ROG (2025): Advanced Version

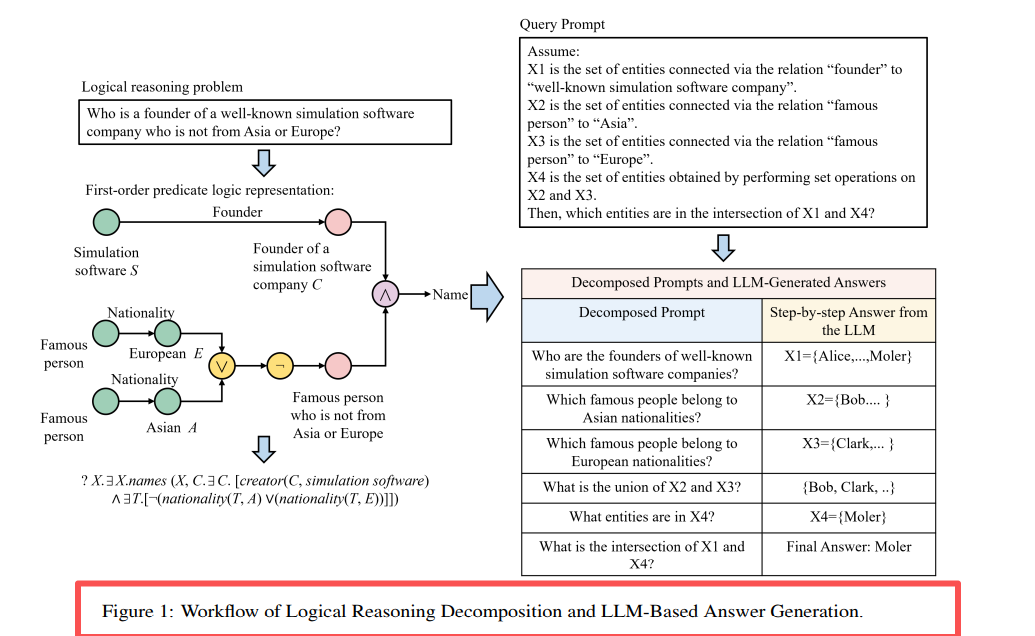

ROG inherits the LARK framework and adds an Agent consensus mechanism:

ROG = LARK core + Multi-Agent Collaboration + Chain-of-Thought Enhancement

Explanation of Improvements

- Agent design: each Agent combines a knowledge base with an LLM, and multiple Agents reach decisions by consensus

- CoT enhancement: more explicit chain-of-thought prompt templates

- Domestic adaptation: built on ChatGLM + Neo4j and targeted at vertical domains such as electric power

ROG's data flow model

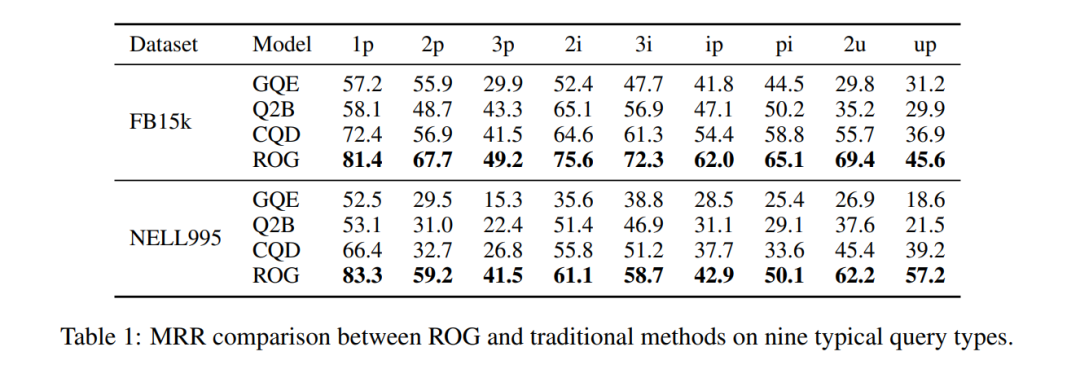

Performance leap: on FB15k, the MRR of ip queries (intersection followed by projection) increased from 29.3 to 62.0, a relative gain of about 112%.
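
For reference, MRR averages the reciprocal rank of the correct answer over test queries; KG reasoning benchmarks usually report it multiplied by 100 and computed with filtered ranks (other valid answers removed before ranking). A minimal sketch of the metric and of the relative-gain arithmetic behind the figure above; the rank list is a hypothetical toy input.

```python
def mrr(ranks):
    """Mean reciprocal rank from 1-based ranks of the correct answers."""
    return sum(1.0 / r for r in ranks) / len(ranks)


def relative_gain(old, new):
    """Relative improvement of `new` over `old`, in percent."""
    return (new - old) / old * 100


# Toy ranks (hypothetical): correct answers ranked 1st, 2nd and 4th.
print(mrr([1, 2, 4]))                       # (1 + 0.5 + 0.25) / 3
print(round(relative_gain(29.3, 62.0), 1))  # 111.6
```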

Table 1: MRR comparison on the FB15k dataset. ROG leads on every query type, and its largest improvements are on composite queries.

III. Paradigm Establishment and Future Directions

Together, the two generations of papers validate a paradigm:

"Retrieval Augmentation + Query Decomposition + LLM Reasoning" is an effective path for KG complex logical reasoning.

Key trends:

- Abstraction is crucial: strip away semantic noise and focus on the logical structure

- The decomposition strategy sets the performance ceiling: chain decomposition is more reliable than end-to-end reasoning

- Model capabilities keep improving: from Llama2-7B to ChatGLM, progress in the base model brings significant gains

Although ROG's Agent mechanism improves interpretability, its core contribution is engineering optimization rather than a theoretical breakthrough. Future directions may include dynamic decomposition strategies that adapt to query complexity, multi-modal KG fusion, and larger-scale open-domain validation.