Xiaohongshu Releases SWE-Bench Mobile: When AI Agents Face Codebases of Apps with Hundreds of Millions of Users, the Highest Pass Rate is Only 12%?


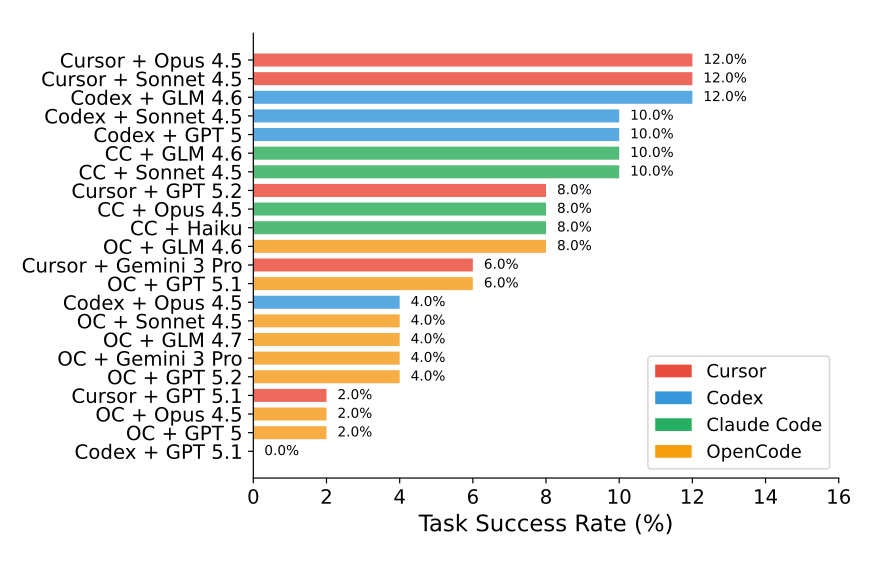

The Xiaohongshu team has released SWE-Bench Mobile, a new benchmark that evaluates AI agents on real-world mobile application codebases. The headline result is sobering: even the best-performing agent passes only 12% of tasks when working in the codebase of an app with hundreds of millions of users.

What is SWE-Bench Mobile?

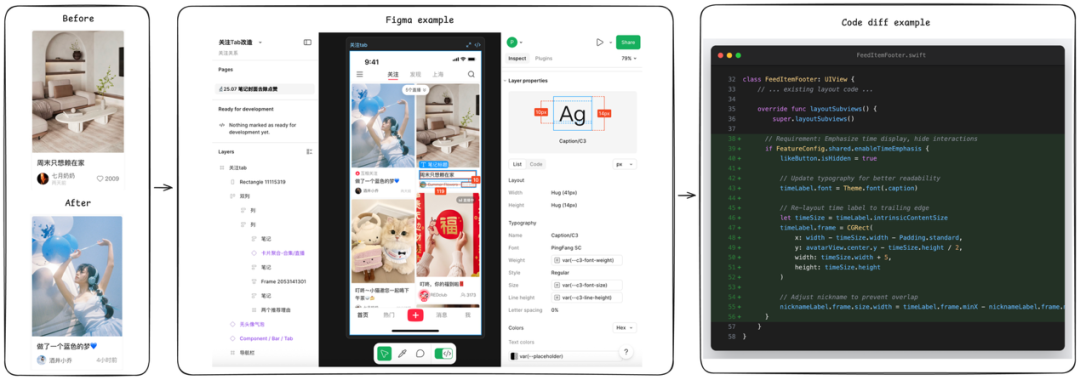

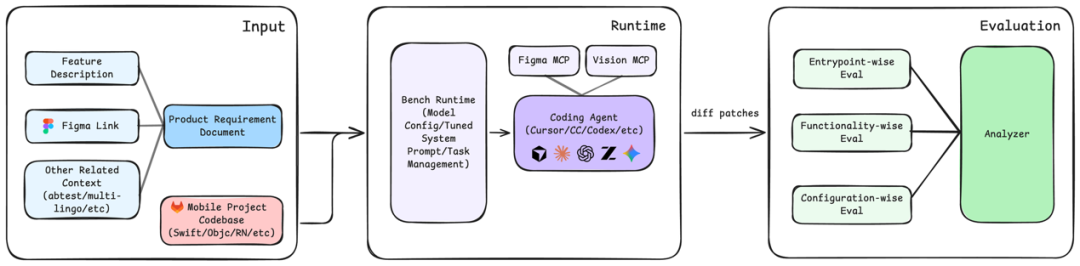

SWE-Bench Mobile is a code repair benchmark for mobile application development. It consists of real-world bug-fix tasks from mobile applications, each requiring an AI agent to:

- Understand complex mobile application code structures

- Locate the root cause of problems

- Generate correct fix code

- Ensure that fixes do not introduce new problems

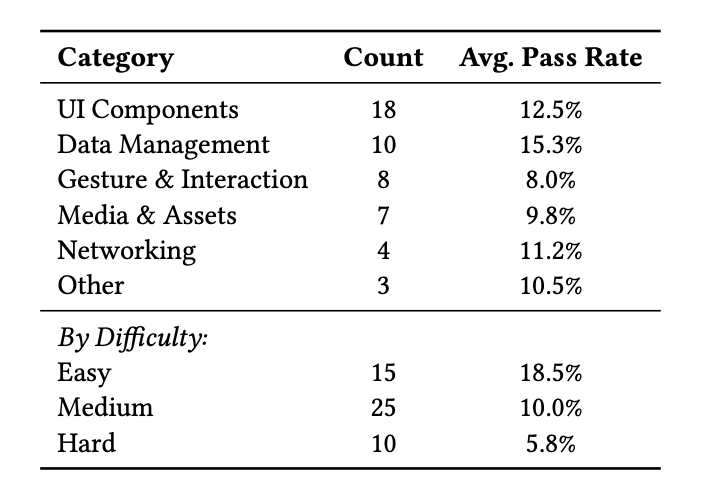

Test Results

Several mainstream AI agents were tested, with the following results:

- Best Performance: 12% pass rate

- Average Level: 5-8% pass rate

- Some Models: Close to 0% pass rate

These pass rates are far lower than what agents achieve on the traditional SWE-Bench.
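To make the metric concrete, here is a minimal sketch of how a pass rate like the ones above could be computed. It is not the benchmark's actual harness: the `TaskResult` structure, its field names, and the sample data are hypothetical, and the rule that a task counts as passed only when the fix works and nothing else breaks is an assumption based on the task requirements listed earlier.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """Outcome of one benchmark task (hypothetical structure, not the real harness)."""
    task_id: str
    fix_tests_passed: bool         # tests targeting the reported bug now pass
    regression_tests_passed: bool  # tests that passed before the fix still pass


def is_resolved(result: TaskResult) -> bool:
    # Assumption: a task passes only if the bug is fixed AND no new problem appears.
    return result.fix_tests_passed and result.regression_tests_passed


def pass_rate(results: list[TaskResult]) -> float:
    """Fraction of tasks resolved; 0.0 for an empty run."""
    if not results:
        return 0.0
    resolved = sum(1 for r in results if is_resolved(r))
    return resolved / len(results)


# Made-up sample run, for illustration only.
sample = [
    TaskResult("task-001", True, True),    # resolved
    TaskResult("task-002", True, False),   # fixed the bug but broke something else
    TaskResult("task-003", False, True),   # did not fix the bug
    TaskResult("task-004", False, False),  # neither
]

print(f"pass rate: {pass_rate(sample):.0%}")  # prints "pass rate: 25%"
```

Under this strict rule, a fix that repairs the bug but introduces a regression scores the same as no fix at all, which is one reason pass rates on large, tightly coupled codebases can stay low.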

Why is it so difficult?

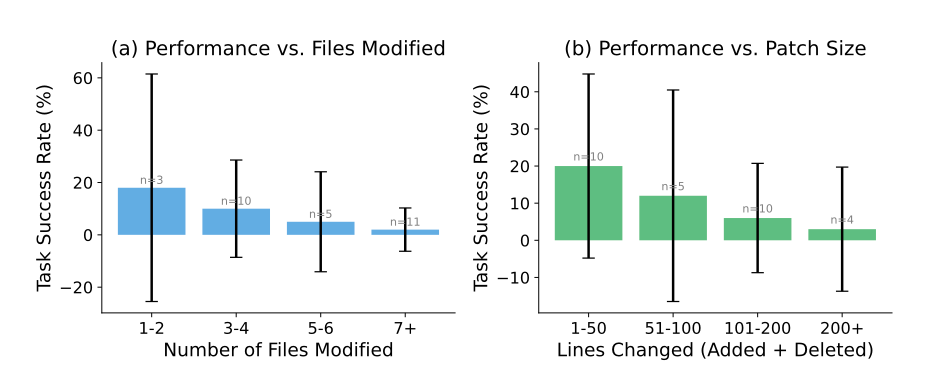

The special nature of mobile application codebases brings additional challenges:

- Multi-Platform Adaptation: Fixes must account for both iOS and Android

- Complex Dependencies: Modules in mobile applications are tightly coupled

- Performance Constraints: Mobile devices have limited resources, so code must be highly optimized

- Complex UI Logic: Interface interaction code is difficult to analyze statically

Comparison with Traditional Benchmarks

Compared to the traditional SWE-Bench, the Mobile version is significantly harder:

- Larger codebase size

- More complex business logic

- Test cases that are harder to pass

- Higher context window requirements

Industry Significance

This benchmark reveals the limitations of AI agents in real-world industrial scenarios. Although AI has made rapid progress in code generation, it still has a long way to go in handling large, complex production projects.

Future Outlook

The release of SWE-Bench Mobile gives developers of AI programming tools an important yardstick. It reminds us that:

- AI-assisted programming still requires human supervision

- Complex projects require more intelligent context understanding

- There is still a lot of room for improvement in model capabilities

Resource Links