Anthropic's $350 Billion Valuation and the OpenClaw Paradox

In mid-February, Anthropic completed a $30 billion funding round, reaching a valuation of $350 billion. That figure is on par with the combined market capitalization of India's ten largest IT companies (approximately $350 billion, with 1.6 million employees), while Anthropic has only 3,000 employees.
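To make the headcount contrast concrete, here is a rough back-of-the-envelope sketch using only the figures quoted above. The variable and function names are illustrative, and dividing valuation by headcount is a simplification, not a standard valuation metric.

```python
# Figures as quoted in the text; the per-employee split is an illustrative simplification.
ANTHROPIC_VALUATION_USD = 350e9
ANTHROPIC_EMPLOYEES = 3_000
INDIA_TOP10_IT_MARKET_CAP_USD = 350e9
INDIA_TOP10_IT_EMPLOYEES = 1_600_000


def value_per_employee(total_usd: float, headcount: int) -> float:
    """Return the dollar value attributed to each employee."""
    return total_usd / headcount


anthropic_per_head = value_per_employee(ANTHROPIC_VALUATION_USD, ANTHROPIC_EMPLOYEES)
india_it_per_head = value_per_employee(
    INDIA_TOP10_IT_MARKET_CAP_USD, INDIA_TOP10_IT_EMPLOYEES
)
ratio = anthropic_per_head / india_it_per_head

print(f"Anthropic: ${anthropic_per_head:,.0f} per employee")
print(f"India's top ten IT firms: ${india_it_per_head:,.0f} per employee")
print(f"Ratio: about {ratio:.0f}x")
```

On these numbers, each Anthropic employee carries roughly $117 million of valuation against about $219,000 for the Indian IT group, a gap of around 533 times.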

But in the same week, the story of an open-source project called OpenClaw exposed a potential strategic error the company might be making.

The Logic of $30 Billion

Fund flows indicate market expectations. In the FTX bankruptcy liquidation, its Anthropic shares were sold for approximately $1.3 billion. If retained until now, this investment could be worth $20-30 billion.

Investors' logic is simple:

- Technological Leadership: Opus 4.6 scores 40% on the FrontierMath benchmark, essentially on par with GPT-5.2

- Product Momentum: Claude Code's weekly active users have doubled since January, and 4% of GitHub commits already come from Claude Code

- Commercialization Path: Projected to be profitable by 2028 while sticking to an ad-free product strategy

But valuation is a bet on the future, and the future is full of strategic risks.

OpenClaw Incident: An Analysis of a PR Disaster

Peter Steinberger developed OpenClaw, an open-source AI programming tool based on the Claude API. Anthropic's lawyers issued a cease-and-desist letter, citing the name's similarity to "Claude."

What was the result? Steinberger renamed the project Moltbot, and OpenAI then acquired it, taking the entire project and its community along.

The comments on X were consistent:

"Anthropic really fumbled the bag on the OpenClaw arc"

"anthropic lost the opportunity - openclaw + Claude code would be a game changer"

"Generational fumble from Anthropic"

This isn't a legal issue; it's a strategic one. OpenClaw's GitHub stars already exceed those of VS Code and are three times those of Claude Code. As an open-source platform, it represents the trust and attention of the independent developer community.

By acquiring OpenClaw, Anthropic could have gained three things:

- Trust of the open-source community

- Cross-platform capabilities (OpenClaw supports Windows, while Claude Code is primarily on macOS)

- Entry point to the developer ecosystem

But Anthropic chose legal means. OpenAI got everything effortlessly.

Two Dimensions of Platform Strategy

From a platform strategy perspective, there are two key observations:

First, Anthropic is repeating Microsoft's mistakes.

In the 2000s, Microsoft was hostile to the open-source community and lost mindshare among an entire generation of developers as a result. Later, Satya Nadella gradually repaired the relationship by acquiring GitHub, embracing Linux, and supporting open source.

Anthropic's handling of OpenClaw is reminiscent of Microsoft in that era—using the legal team to solve problems that should have been addressed by the strategy team.

Second, controlling the tool layer controls the user entry point.

OpenAI's acquisition of OpenClaw is not about technology; it's about a developer aggregation platform. When the model itself becomes a commodity, whoever controls the tool layer controls the user entry point.

Claude Code's strategy is deep understanding: reading the code before acting. Codex's strategy is application first, model iteration, and acquisition integration. There is no right or wrong path, but only one is actively expanding the ecosystem boundary.

The Pentagon's Paradox

In the same week, another story surfaced: the Pentagon is considering severing ties with Anthropic because Anthropic insists on imposing restrictions on the military use of its AI models.

On the surface, this is a matter of values: Anthropic was founded by former members of OpenAI's safety team, and AI safety is its core DNA. But from a strategic perspective, this is a boundary-setting issue.

If Anthropic insists on restricting military use:

- It loses government contract revenue

- It may lose research cooperation opportunities with the Pentagon

- But it maintains its brand positioning around AI safety

If Anthropic is completely open:

- It gains government revenue

- But it may deviate from its safety mission

- It may trigger ethical dilemmas within its own team

There is no perfect answer. But it is worth noting that the same Anthropic that turns to lawyers against open-source developers stands on principle when it comes to military use. This inconsistency may create greater brand risks in the future.

CEO's Prophecy and the Paradox of Hiring

Dario Amodei has stated on multiple public occasions that software engineering will be "completely replaced" by AI within 6-12 months.

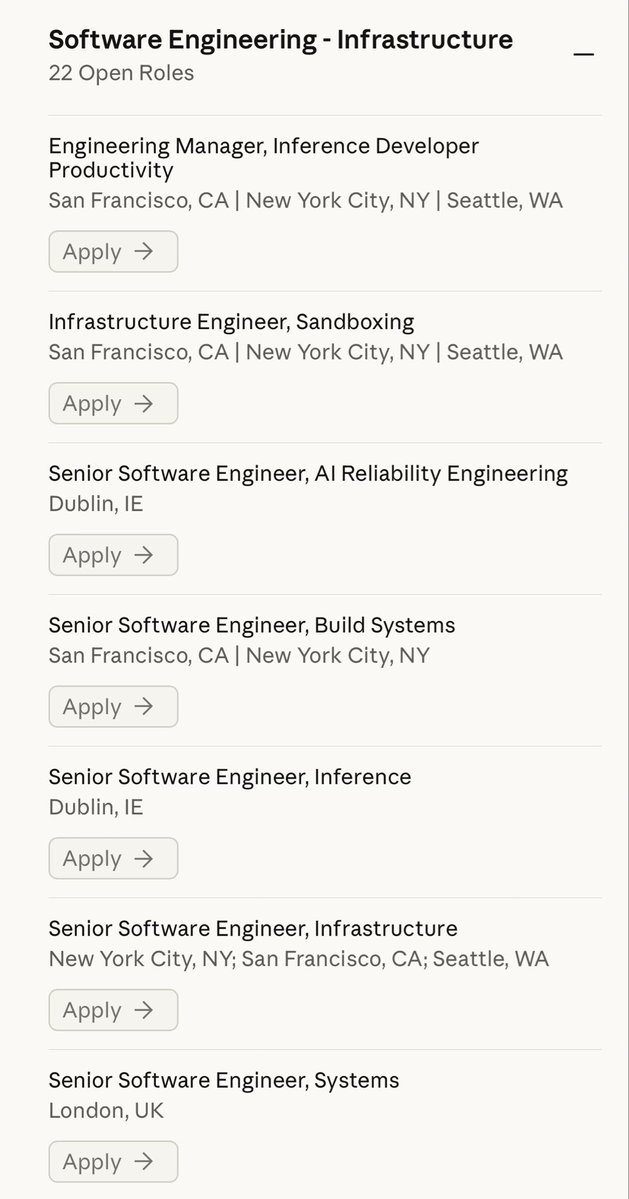

Ironically, Anthropic is still hiring a large number of software engineers at the same time.

Comments on X:

"At this point, I'm sure the Anthropic CEO is deliberately pushing the 'developers are becoming obsolete' narrative just to grab attention on X."

This is not a simple contradiction. Amodei's prediction may be sincere, but a sincere prediction is not necessarily a correct one. More importantly, this statement is alienating Anthropic's core user group: developers.

When your CEO says that a certain profession will disappear within a year, and this profession happens to be the main user of your product, the cost of this public relations strategy may be higher than imagined.

Technical Capabilities and Strategic Blind Spots

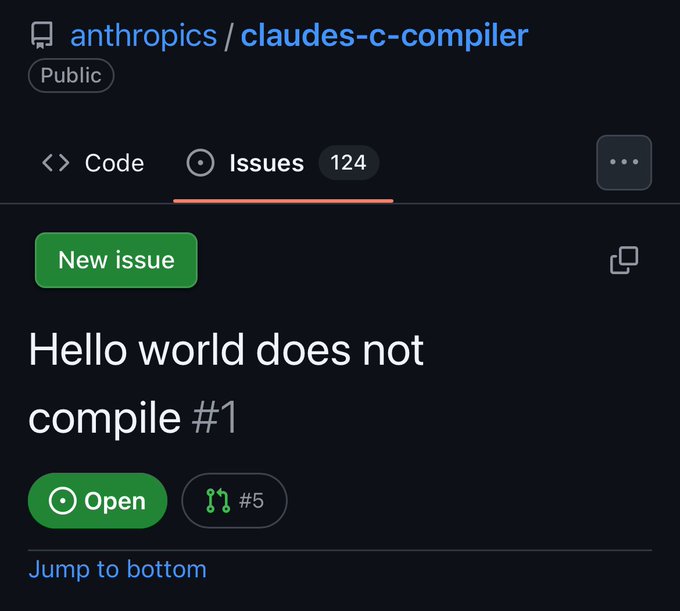

Anthropic's technical capabilities are real. Sixteen Claude agents wrote a 100,000-line Rust compiler from scratch that can compile the Linux kernel. The effort took 2,000 sessions and $20,000 in API fees.
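For a sense of scale, the quoted figures can be broken down into unit costs. This is a minimal sketch using only the numbers above; the names are illustrative, and it assumes the API fees and output are spread evenly across sessions, which the source does not state.

```python
# Figures as quoted in the text; an even spread across sessions is an assumption.
TOTAL_API_COST_USD = 20_000
TOTAL_SESSIONS = 2_000
TOTAL_LINES_OF_RUST = 100_000

cost_per_session = TOTAL_API_COST_USD / TOTAL_SESSIONS
cost_per_line = TOTAL_API_COST_USD / TOTAL_LINES_OF_RUST
lines_per_session = TOTAL_LINES_OF_RUST / TOTAL_SESSIONS

print(f"Cost per session: ${cost_per_session:.2f}")
print(f"Cost per line of Rust: ${cost_per_line:.2f}")
print(f"Lines per session: {lines_per_session:.0f}")
```

That works out to about $10 per session, $0.20 per line of code, and 50 lines per session on average.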

But technical leadership does not equal strategic correctness. History is full of companies that led on technology but erred on strategy:

- Netscape had the best browser, but lost to Microsoft's bundling strategy

- BlackBerry had the best enterprise phone, but lost to the iPhone's ecosystem

- Yahoo had the best directory, but lost to Google's search algorithm

Anthropic is now in a position similar to Netscape in 1996—technically leading, but competitors are surrounding it from multiple directions.

The Bottom Line

Anthropic's $350 billion valuation is built on an assumption: technical leadership can translate into a sustainable competitive advantage.

But the OpenClaw incident exposed the fragility of this assumption. When model capabilities become homogeneous, the developer ecosystem will become the only moat. In building that ecosystem, Anthropic is making strategic mistakes:

- Treating the open-source community with legal means rather than strategic cooperation

- Alienating core user groups with sensational remarks

- Letting competitors win without a fight in the platform war

$30 billion buys the future. But if the strategic direction is wrong, no amount of money can make up for the loss of mindshare.

For developers and investors, this is a question worth considering: In the platform war of AI, are you betting on technical capabilities or ecosystem strategy?